Announcing the AEA RCT Registry’s new metadata repository

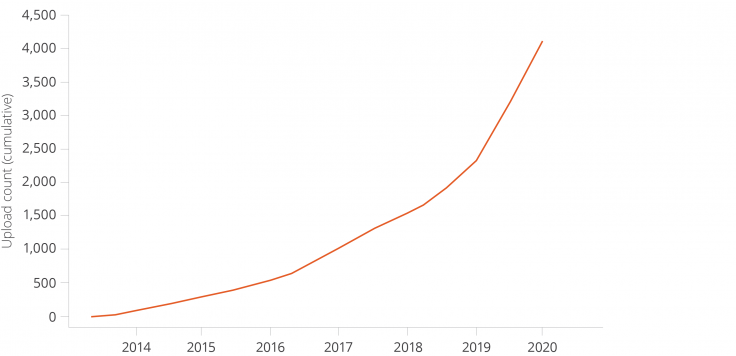

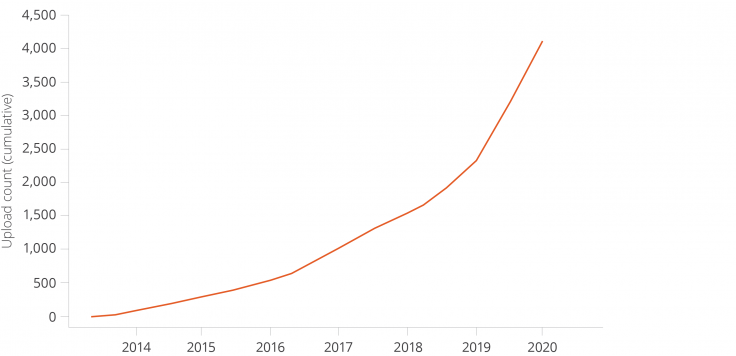

In 2013, J-PAL began a collaboration with the American Economic Association (AEA) to create the AEA’s Randomized Controlled Trial (RCT) Registry. The registry makes public all registered randomized trials in the social sciences. Since its inception, the registry has grown to include over 4,000 randomized evaluations from over 150 countries. Now, users can browse registry metadata with ease using monthly data snapshots uploaded to the Harvard Dataverse by J-PAL. These snapshot datasets let users access registry metadata (such as the primary outcome, randomization method, and sample size), cite a permanent link, and analyze how researchers use the registry itself.

What information do the Registry’s monthly uploads to the Dataverse include?

The snapshot dataset comprises every trial registered up until the first Monday of the current month. The metadata in the dataset includes all the public fields available on the registry and can be sorted by unique identifiers such as study title, URL, RCT ID, and Digital Object Identifier (DOI) number. The Primary Investigator and other Primary Investigators are listed for each trial. Additionally, there are fields related to studies’ experimental design such as the primary outcome, randomization method and unit, and sample size. It is also possible to see which trials uploaded files such as IRB approval, pre-analysis plans (PAPs), and post-trial documents such as published papers.

| Total numbers (as of December 2, 2020) | |
|---|---|
| 4,155 studies across 153 countries | 5,739 researcher accounts |
| 1,711 pre-registered studies (registered before the intervention start date) | 1,521 studies contain pre-analysis plans (PAPs) |
| 7,500+ monthly web views | 204 metadata downloads since February 2020 |

What can the Registry metadata be used for?

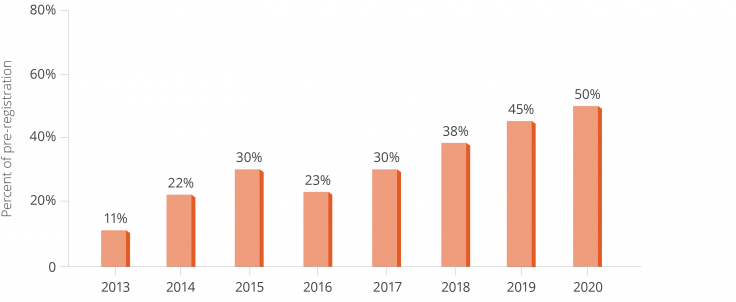

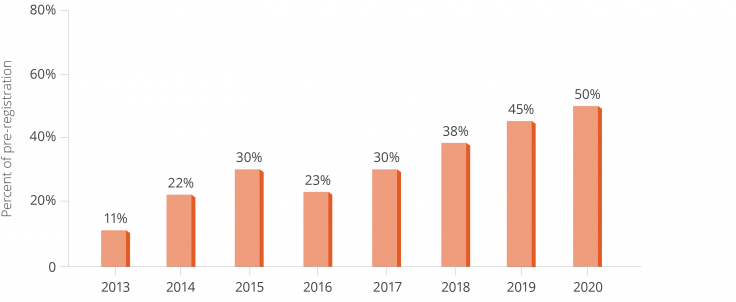

Making public the AEA RCT Registry’s metadata can foster greater transparency in the research community. The metadata describes the makeup of the registry and the randomized social science trials that are in development, active, completed, and withdrawn. Though registration is still relatively new in the social sciences, the registry metadata has the potential to help reveal the extent of the file drawer problem and publication bias, by making available data on initiated studies and not only published studies. To make the findings from the metadata easily accessible and citable, researchers can use the Dataverse dataset and DOI for analysis and citation purposes.

The metadata can be used in a variety of ways. For example, researchers can view information on the percentage of registrations that contain pre-analysis plans or have entered post-trial information, such as their trial status (e.g., completed, withdrawn, etc.) or uploaded publications. Downloaders interested in particular keywords, locations, or sectors (e.g., agriculture, gender) can search the dataset using these terms, facilitating meta-analysis of studies. Or researchers can analyze how studies have evolved over time and vary across location (e.g., the number or types of trials).
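As a rough illustration of these uses, the sketch below computes the share of registrations with a pre-analysis plan, the share registered before the intervention start date, and a keyword filter. It is a minimal sketch under stated assumptions: the column names (`rct_id`, `keywords`, `registered_on`, `intervention_start`, `has_pap`) and the sample rows are made up for the example and may not match the field names in the actual Dataverse snapshot.

```python
import csv
import io
from datetime import date

# Made-up rows standing in for a downloaded snapshot file. The column
# names are assumptions for illustration, not the registry's real fields.
SAMPLE_CSV = """rct_id,title,keywords,status,registered_on,intervention_start,has_pap
EX-0001,Example trial A,agriculture,completed,2019-01-10,2019-03-01,yes
EX-0002,Example trial B,gender;education,in development,2020-06-15,2020-05-01,no
EX-0003,Example trial C,agriculture;gender,withdrawn,2018-02-20,2018-09-01,yes
EX-0004,Example trial D,health,completed,2020-01-05,2020-01-05,no
"""


def load_rows(handle):
    """Read a CSV file-like object into a list of dicts, one per trial."""
    return list(csv.DictReader(handle))


def share(rows, predicate):
    """Fraction of rows for which predicate(row) is true (0.0 if empty)."""
    if not rows:
        return 0.0
    return sum(1 for row in rows if predicate(row)) / len(rows)


def has_pap(row):
    """True if the (hypothetical) has_pap column marks an uploaded PAP."""
    return row["has_pap"].strip().lower() == "yes"


def is_preregistered(row):
    """True if the trial was registered strictly before the intervention began."""
    registered = date.fromisoformat(row["registered_on"])
    started = date.fromisoformat(row["intervention_start"])
    return registered < started


def with_keyword(rows, term):
    """Rows whose semicolon-separated keywords include term (case-insensitive)."""
    term = term.lower()
    return [
        row for row in rows
        if term in [k.strip().lower() for k in row["keywords"].split(";")]
    ]


rows = load_rows(io.StringIO(SAMPLE_CSV))
pap_share = share(rows, has_pap)
prereg_share = share(rows, is_preregistered)
gender_ids = [row["rct_id"] for row in with_keyword(rows, "gender")]

print(f"Share with a PAP: {pap_share:.0%}")
print(f"Share pre-registered: {prereg_share:.0%}")
print(f"Trials tagged 'gender': {gender_ids}")
```

To run this against a real snapshot, one would replace `io.StringIO(SAMPLE_CSV)` with an opened file and adjust the column names to those in the downloaded dataset.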

J-PAL encourages researchers to use the metadata on the Dataverse for research and to learn more about trial registration, the Registry’s contents, and randomized evaluations in the social sciences as a whole.

Ready to check it out? Visit the AEA RCT Registry’s Dataverse. For questions or help, please reach out to [email protected].

To learn more about J-PAL’s research transparency efforts, read about our core activities.

When a study is not published in an academic journal, how can we ensure its results, even null results, are still reported—instead of remaining inaccessible in a (metaphorical) file drawer?

... and other topics discussed at the annual meeting of the Berkeley Initiative for Transparency in the Social Sciences

Last month we presented at an event hosted by the Berkeley Initiative for Transparency in the Social Sciences (BITSS) on the "file drawer problem": when a study is not published in an academic journal, how can we ensure its results, even null results, are still reported—instead of remaining inaccessible in a (metaphorical) file drawer?

The event, a one-day workshop followed by BITSS' one-day annual meeting, brought together an interdisciplinary group of researchers, funders, journal editors, design specialists, and research administrators to discuss integrated approaches for improving the tracking of funded research outputs, with special consideration for projects that yield null results.

The workshop, “Unlocking the File Drawer,” focused on post-trial reporting of results in social science research; we discussed ideas for making it easier for researchers to report the results of their studies. At the annual meeting the next day, participants presented new research on issues in research transparency, some of which was funded through BITSS' SSMART grant program.

Some of the ideas that emerged over the course of the two-day event included:

A template for null results reporting

Scott Williamson, a graduate student at Stanford, presented his work with co-authors at the Immigration Policy Lab (IPL) on a proposed template for reporting null results. The template is designed to make it easier for researchers to think about how they can publish their results in formats other than peer-reviewed journals (which are unlikely to accept papers reporting null results).

The template would be short (approximately five pages), with a clear outline and structure, and would include several components taken from pre-analysis plans or already completed analysis, reducing the need for new work.

Progress on post-trial reporting in registries

Nici Pfeiffer from the Center for Open Science, which hosts the Open Science Framework, mentioned that they have a process for sending reminder "nudges" to researchers to update their pre-registration with results.

Merith Basey of Universities Allied for Essential Medicines talked about their joint work with TranspariMed in relation to FDAAA 2007, a law that requires certain clinical trials to report results within twelve months of completing the study. They've released results of their own research on US universities' compliance with clinical trial transparency regulations (non-compliant clinical trials can technically be fined $10,000 per day for violating the regulations).

Cecilia Mo of UC Berkeley raised the question of whether successful replications should be treated like null findings, since they too are underreported and end up in the file drawer.

I (James Turitto) provided updates on the American Economic Association’s RCT registry, which is managed at J-PAL, and reported on changes made to the registry over the past few years (like the introduction of a registry review system and Digital Object Identifiers) and the current status of post-trial results reporting by researchers.

Jessaca Spybrook of Western Michigan University presented on REES: The Registry of Efficacy and Effectiveness Studies. It’s an education registry plus pre-analysis plan repository, and systematic categorization distinguishes this registry from others. (See slides from her presentation.)

Journal updates on steps to improve research transparency

Andrew Foster of Brown University, Editor-in-Chief of the Journal of Development Economics, provided an update on the pre-results review pilot at the Journal of Development Economics. So far, there have been 85 pre-results review submissions to the JDE, and the project has been largely successful.

Lars Vilhuber of Cornell University, American Economic Association Data Editor, presented on the AEA’s new data publication policy.

New in open access and open data

Elizabeth Marincola of the African Academy of Sciences (AAS) spoke about AAS’s innovative open access publishing platform, AAS Open Research. Open Research allows African scientists to publish their research quickly on a fully accessible, peer-reviewed platform. The platform publishes (or will publish) research from all fields of science in a range of formats, including traditional research articles, systematic reviews, research protocols, replication and confirmatory studies, data notes, negative or null findings, and case reports. (See slides from her presentation.)

Daniella Lowenberg and John Chodacki recently published a book, Open Data Metrics, which proposes a path forward for the development of open data metrics by discussing data citations and how to credit researchers for the data they produce.

Assessing the effectiveness of pre-analysis plans

Daniel Posner of UCLA presented on Pre-Analysis Plans: A Stocktaking, joint work with George K. Ofosu, which analyzes a representative sample of 195 PAPs from the AEA and EGAP registration platforms to assess whether PAPs are sufficiently clear, precise, and comprehensive to be able to achieve their objectives of preventing “fishing” and reducing the scope for post-hoc adjustment of research hypotheses.

Recorded presentations for both days can be found here: Day 1: Unlocking the File Drawer, Day 2: Annual Meeting.

About BITSS and J-PAL transparency work

BITSS aims to enhance research practices across various disciplines in the social sciences (including economics, psychology, and political science) in ways that promote transparency, reproducibility, and openness. J-PAL works closely with BITSS and has sought to be a leader in making research more transparent for over a decade by developing a registry for randomized evaluations and publishing data from research studies conducted by our network of 194 affiliated professors at universities around the world. To learn more about J-PAL’s research transparency efforts, read about our core activities.

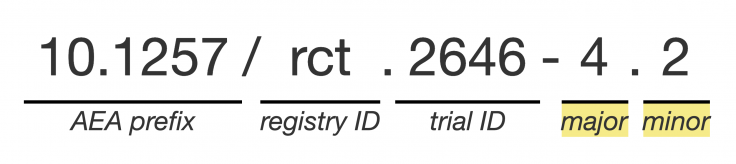

J-PAL and the American Economic Association (AEA) have been working together over the past year to create digital object identifiers, or DOIs, for each trial registered in the AEA Registry for Randomized Controlled Trials.

We are happy to announce that as of August 13, 2019, the Registry has officially launched this new feature! DOIs provide persistent links to web content to ensure that the content is discoverable at all times—even if its URL or location within a site changes.

Using DOIs for trial registration is still new in the social sciences, and we see it as an important move forward for research transparency. DOIs offer persistence and permanence that standard URLs do not, and are widely used across academia to provide permanent links to published journal articles as well as data (e.g., the new AEA Data and Code Repository at openICPSR).

This will make it easier for researchers to link their study designs and their (optional) pre-analysis plans directly to their publications and published research data. This also ensures that research study designs that have been registered will remain findable in perpetuity.

What exactly is a digital object identifier?

It is a string of numbers, letters, and symbols used to permanently identify an article or document and link to it on the web.

Using a standard URL to cite or share a document can be risky. Technological updates, or organizational changes, may change URLs. When assigning DOIs, organizations like the AEA insulate researchers and publications from such changes. Because DOIs are permanent, they allow users to consistently cite other people's work without the risk of broken links.

Furthermore, the underlying registry monitors citations and records when a DOI is used as part of a reference list, regardless of which journal ultimately publishes the citing article. It thus becomes easier for researchers and organizations to document the impact of their work.

As a member of Crossref, an official DOI Registration Agency of the International DOI Foundation, the AEA has a DOI prefix unique to the organization and has provided the AEA RCT Registry a set of suffix numbers that include “RCT” in the DOI handle, along with the same ID number as the registration.
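To make the prefix and suffix structure concrete, here is a minimal sketch that splits a DOI into its two parts and builds a resolvable link through the public `https://doi.org/` resolver. The example DOI is a hypothetical placeholder: it is not the AEA's real prefix and not a real registry entry, and the check for "rct" in the suffix reflects only the description above, not a documented format.

```python
from urllib.parse import quote

DOI_RESOLVER = "https://doi.org/"

# Hypothetical placeholder, NOT a real registry DOI or the AEA's prefix.
EXAMPLE_DOI = "10.12345/rct.0000"


def split_doi(doi):
    """Split a DOI into (prefix, suffix) at the first slash.

    The prefix identifies the registering organization and always
    starts with "10."; the suffix is chosen by that organization.
    """
    prefix, sep, suffix = doi.partition("/")
    if not sep or not prefix.startswith("10.") or not suffix:
        raise ValueError(f"not a well-formed DOI: {doi!r}")
    return prefix, suffix


def doi_url(doi):
    """Return a persistent link for the DOI via the doi.org resolver."""
    split_doi(doi)  # raises ValueError if the DOI is malformed
    return DOI_RESOLVER + quote(doi, safe="/")


def looks_like_registry_doi(doi):
    """Heuristic (an assumption): the suffix contains 'rct'."""
    _, suffix = split_doi(doi)
    return "rct" in suffix.lower()


prefix, suffix = split_doi(EXAMPLE_DOI)
print(prefix, suffix)
print(doi_url(EXAMPLE_DOI))
```

Because the link goes through the resolver rather than pointing at the registry site directly, it keeps working even if the page's URL changes.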

We encourage all registry users to cite the registry DOI in their publications to increase visibility and permanence of their research. For more information, see the FAQ page of the AEA RCT Registry.

Adding DOIs to auto-generated registry citations will make trial registration entries not only more permanent, but also more searchable and accessible. Learn more about J-PAL’s research transparency efforts.