Strengthening capacity for evidence generation: J-PAL’s evaluation incubators

This is the fourth blog in a series illustrating stories of how J-PAL’s training courses have built new policy and research partnerships and strengthened existing ones to advance evidence-informed decision-making. The first blog in the series highlights two meaningful examples from J-PAL’s Evaluating Social Programs course participants, the second showcases how custom courses strengthened government partnerships, and the third illustrates how online course participants applied learnings at their organizations.

J-PAL’s evaluation incubators use different combinations of training, resources, and technical support for varying lengths of time to guide participants through designing and implementing a randomized evaluation of their own program. Training lays the foundation for the incubators: participants learn the nuts and bolts of how to conduct a rigorous impact evaluation and are then able to better engage with other incubator elements, leading to more fruitful research collaborations.

To support evaluations with high potential for impact, our incubators usually focus on a particular region or sector and are often linked to specific funding opportunities or initiatives. This year, J-PAL held four incubators across three regions focusing on a range of issues, from expanding labor market opportunities in Brazil to Covid-19 recovery in the United States. Incubator participants typically apply with a project and research idea already in mind, such as a group of officials from Pierce County, Washington looking to assess the impact of a multifaceted eviction prevention program.

With technical support from J-PAL staff on project and proposal development, participants apply what they learned in training to plan a randomized evaluation of their target program. Highly promising projects, in which a randomized evaluation appears feasible, are often matched with J-PAL affiliated researchers to carry out the study together. This blog showcases three examples of how training activities within J-PAL incubators helped partners better engage with the research design process and generate evidence to guide program decision-making.

Expanding research avenues to prevent gender-based violence in Peru

Photo credit: IPA/J-PAL

J-PAL Latin America and the Caribbean (LAC) has strengthened partnerships with local organizations through incubator courses, building long-term relationships to inform critical policy issues. One example is an ongoing collaboration with Peru’s Ministry of Women and Vulnerable Populations (MIMP) and Innovations for Poverty Action (IPA), which began in 2016 and uses scientific evidence to identify the best strategies to confront gender-based violence (GBV).

As a first step, J-PAL LAC and IPA hosted a two-day incubator workshop at the Ministry headquarters in Lima. Approximately 36 government officials from key areas across the Ministry participated in the sessions, with MIMP's senior management, including the former Vice-Minister of Women Ana María Mendieta, committed to the workshop's success. Facilitated by IPA and J-PAL LAC staff, the workshop provided theoretical and practical sessions to enhance participants’ skills in evidence use and generation. It also paved the way to identifying projects with high potential for impact in preventing violence against women that could be rigorously evaluated.

Since the workshop, the Ministry, IPA, J-PAL, and several J-PAL affiliated researchers with a focus on gender in the region, including Jorge Agüero and Erica Field, have joined forces to evaluate and improve the design of MIMP’s GBV prevention programs. Currently, four impact evaluations are taking place to develop a learning cycle in Peru, including an evaluation of an innovative program to help men regulate their emotions and reduce the perpetration of intimate partner violence.

Connecting research and implementation for collaborations in Europe

Photo credit: Elyssa Majed, J-PAL Europe

J-PAL Europe has organized several incubators in recent years to advance research in specific areas, like promoting social inclusion in Europe, and to equip partners with the tools to explore collaborations on randomized evaluations. Incubators have also served to strengthen partnerships with various development agencies, such as an online incubator with the German Development Agency which included designing an impact evaluation across five project teams in East and West Africa. J-PAL Europe also collaborated with the French Fund for Innovation in Development (FID) to add an incubator module to this year’s Summer School on Development Methodologies targeting FID projects interested in launching their own impact evaluations.

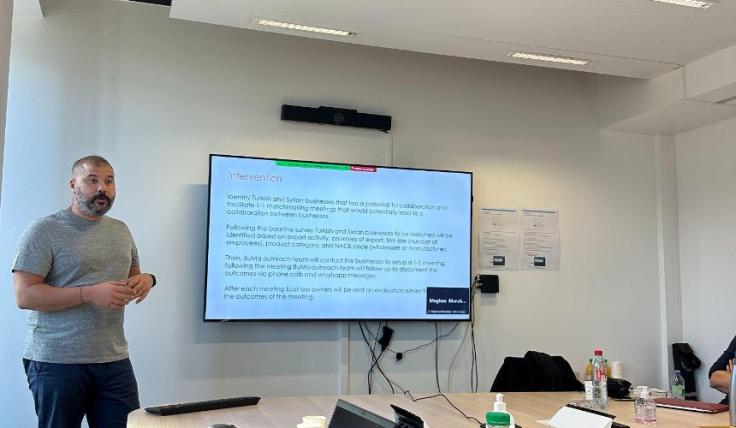

Most recently, the Displaced Livelihoods Initiative (DLI) hosted its first in-person incubator in October 2023, focused on impact evaluations in forced displacement settings. The three-day workshop combined lectures on the fundamentals of impact evaluation with group work sessions where participants could apply the knowledge acquired to their individual projects. One of the participating organizations, Building Markets, an NGO that supports refugee-led businesses in Turkey, offers a compelling example of what the incubator hopes to achieve. The NGO is partnering with a research team, including a J-PAL affiliated researcher, to better understand the impact of its programming. It took part in the incubator to better understand and refine the design of its impact evaluation, including its theory of change and outcome indicators related to social cohesion between refugees and host communities.

"Joining this incubator enabled my ideas to take root and flourish, nurtured by the collective brilliance of curious minds. It allowed me to connect the dots between research and implementation, and was useful to deepen my understanding of research concepts."

Bora Arican, Turkey Country Director of Building Markets

Leveling up engagement to promote wildfire defensive practices in the United States

Photo credit: Jackson County Fire District 3

J-PAL North America runs evaluation incubators through multiple initiatives, focusing on topics such as housing stability and state and local government partnerships. In 2021, Bob Horton, former Chief Executive of Jackson County Fire District 3 in Oregon state and current Deputy Director of the Western Fire Chiefs Association, applied to be an incubator partner through the State and Local Innovation Initiative (SLI) to receive six months of individualized technical assistance support, flexible funding, and training opportunities. Oregon has been facing increasingly destructive wildfires, which disproportionately impact low-income residents, and Bob was motivated to evaluate different strategies to encourage resident uptake of “defensible space” strategies that help create a buffer between homes and wildfires, like clearing out flammable vegetation near the home. He wanted to make sure the district’s scarce government funding was going to programs that worked but felt that his team did not have the administrative capacity or specialized knowledge to explore rigorous evaluation strategies without additional support.

In addition to meeting regularly with J-PAL North America staff to design a randomized evaluation, Bob attended J-PAL’s Evaluating Social Programs course as a key training component of the incubator to build knowledge and skills in rigorous evaluation. Bob shared that attending the course helped him take his learnings from technical assistance to a new level. Through the incubator, the team refined their research question, identified opportunities for randomization, explored existing evidence on wildfire risk mitigation strategies, and connected with researchers in J-PAL’s network. The incubator project grew into a funded pilot matched with two J-PAL affiliated researchers who are now working with the Jackson County Fire Department and Western Fire Chiefs Association to test interventions to increase adoption of defensible space.

"The training gave me a framework and process to apply evaluation principles to our incubator project and take a closer look at the other things we were spending money on."

Bob Horton, former Chief Executive of Jackson County Fire District 3 and current Deputy Director of the Western Fire Chiefs Association

A holistic approach for project incubation

What sets incubator courses apart from other J-PAL trainings are the integrated activities that work in tandem to strengthen organizational capacities for evidence generation to inform decision-making. Training helps incubator participants build their impact evaluation toolkit and engage more deeply with the technical support they receive from J-PAL staff and researchers to design and implement rigorous evaluations.

If you are interested in learning more about our evaluation incubators, please contact one of our regional office teams. For incubators in Latin America and the Caribbean, contact our LAC office at [email protected] and visit our website in Spanish. For information on the DLI Incubator in Europe, contact our initiative team at [email protected]. For more information on the LEVER Evaluation Incubator in North America, contact the SLI team at [email protected].

This is the first blog in a series illustrating stories of how J-PAL’s training courses have built new policy and research partnerships and strengthened existing ones to advance evidence-informed decision-making. The second showcases how custom courses strengthened government partnerships, the third illustrates how online course participants applied learnings at their organizations, and the fourth shares how training activities within J-PAL's evaluation incubators helped partners engage more deeply with research design.

Since establishing our training group in 2005, J-PAL has delivered hundreds of in-person and online courses to train over 20,000 people around the world on how to generate and use evidence from randomized evaluations. From integrating existing evidence and data into decisions to building research partnerships to conduct impact evaluations, many of our training participants apply learnings to strengthen the culture of evidence use in their organizations.

Growing a community of practice for Evaluating Social Programs

Our flagship course, Evaluating Social Programs, is designed for policymakers, practitioners, and researchers interested in learning how randomized evaluations can help determine whether their programs are achieving their intended impact. Offered in several locations around the world each year, the week-long course combines interactive lectures, real-world case studies, and small group sessions to integrate key concepts with practical applications. Participants build connections with peers, academic researchers, and policy experts and come away from the course with the resources and tools to map out an evaluation strategy for their own programs and policies.

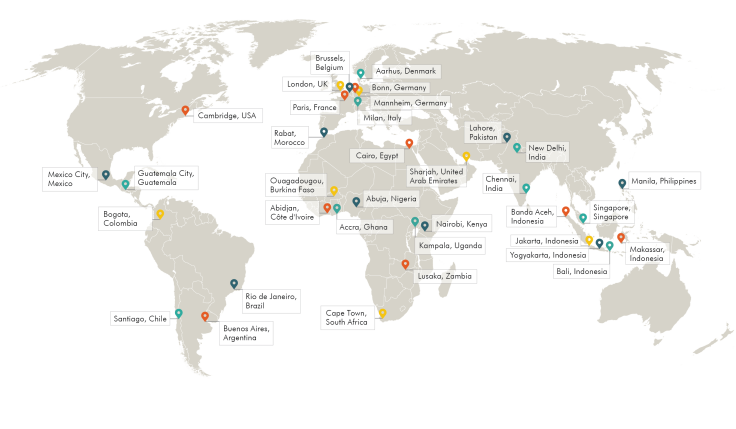

In this blog, we highlight two notable examples of how Evaluating Social Programs courses led to high-impact research and policy partnerships, while recognizing the impressive community of practice among course alumni around the world. First delivered in Cambridge, USA, and Chennai, India in 2005, the course has trained over 2,750 participants through more than 75 offerings in locations ranging from Argentina to Zambia, as well as live Zoom offerings in response to Covid-19. We also have self-paced, online versions of the course in several languages to expand the reach of the course content to learners worldwide.

Forging a partnership for evaluating biometric enrollment in Liberia

J-PAL’s Digital Identification and Finance Initiative (DigiFI Africa) built a partnership with Liberia’s Ministry of Gender, Children, and Social Protection through J-PAL Africa’s virtual Evaluating Social Programs course in August 2021 when Shadrach Saizia Gbokie, a program manager for Liberia's Social Safety Net Project, attended the training and engaged in group work sessions led by a policy manager from DigiFI. After the training, members of our team and the Ministry discussed their work and their interest and capacity to conduct research.

During these conversations, DigiFI connected with the National Coordinator for Social Protection at the Ministry, Aurelius Butler. Since then, DigiFI has worked closely with Aurelius and the Ministry to develop a new randomized evaluation to help answer their questions around how to enroll urban communities in their new household registry using biometric data. Researchers Erika Deserranno, Andrea Guariso, and Andreas Stegmann were matched with this opportunity, and Aurelius and Dackermue Dolo from the Ministry later attended J-PAL Africa’s 2022 Evaluating Social Programs course to dive deeper into the research methodology. One of the questions the evaluation will try to answer is whether biometric identification systems help reduce barriers to accessing social programs and achieve accurate program targeting and efficiency.

"The course on Evaluating Social Programs truly presented a space for the Ministry to fully engage with research and understand what we could achieve for the betterment of our citizens. By providing a comprehensive deep dive into research methodology we are now able to better articulate and refine our objectives. The course truly made an immediate impact in our work, as we have already begun using 90% of what we took from the course."

-Aurelius Butler, National Coordinator for Social Protection, Ministry of Gender, Children, and Social Protection, Liberia

Sparking an education technology evaluation in India

An Evaluating Social Programs training in New Delhi in 2015 was instrumental in seeding an evaluation of Mindspark, a personalized adaptive learning platform of the Indian ed tech company, Educational Initiatives (Ei). At the time of the training, Pranav Kothari was Vice President at Ei and was overseeing the development of the Mindspark product as well as deploying the product in Mindspark centers, which sought to bring a Hindi version of the tool to low-income neighborhoods in urban Delhi. He applied to J-PAL South Asia’s training, hosted in partnership with CLEAR South Asia, with a specific goal: to be sure that every child in a Mindspark center is learning. He was keen to undertake a systematic assessment of the program and its implementation to understand whether or not it worked as intended.

The course’s focus on both the theory and practice enabled Pranav to take the first step in designing a potential evaluation of the Mindspark center program. During the training’s small group work sessions, he worked closely with Maya Escueta, former Policy and Training Manager at J-PAL South Asia, to develop a preliminary evaluation plan. Seeing the potential in the program and its evaluation, Maya visited the Mindspark centers, and eventually brought on J-PAL affiliated researchers Karthik Muralidharan, Abhijeet Singh, and Alejandro Ganimian to conduct a full-scale randomized evaluation. Run in close collaboration with Pranav and the Ei team, the evaluation demonstrated that the program increased learning levels across all groups of students. The study has since become one of J-PAL’s landmark studies in education and offers valuable evidence on the use of computer-adapted learning in low-resource settings.

"While we had been working on the ground for over two years, we wanted a third-party evaluation to determine whether our intervention was useful or not. This course helped us plan and conduct an RCT, allowing us to identify how well our program worked and where we could refine it. These results have led to newer versions of the program, and have helped us scale Mindspark to reach over 300,000 students, or about 500 times the number of students that were initially studying in the centers. I have also learned many skills which helped Ei in doing impact evaluations of other educational interventions."

-Pranav Kothari, Chief Executive Officer, Educational Initiatives

Training to strengthen evidence-informed decision-making

J-PAL’s Evaluating Social Programs course equips program implementers, policymakers, and researchers with the tools to understand and engage with randomized evaluations to become better producers and users of evidence. This blog shines a light on just a few examples of how past participants through the years have integrated learnings at their organizations to advance evidence-informed decision-making.

Interested in learning how to strengthen impact evaluation at your organization? Explore and apply to one of J-PAL’s upcoming Evaluating Social Programs courses.

This is the second blog in a series illustrating stories of how J-PAL’s training courses have built new policy and research partnerships and strengthened existing ones to advance evidence-informed decision-making. The first blog in the series highlights two meaningful examples from J-PAL’s Evaluating Social Programs course participants, the third illustrates how online course participants applied learnings at their organizations, and the fourth shares how training activities within J-PAL's evaluation incubators helped partners engage more deeply with research design.

Through our custom courses, J-PAL works in close partnership with organizations to tailor our training materials on using and producing rigorous evidence to meet specific interests and learning goals. This can include customizing content to a particular sector, geographic area, or level of technical detail, such as a workshop on strategies to measure gender outcomes for practitioners working on gender-related programs in South Asia. These tailored offerings enable participants to engage with examples that resonate with their experiences and equip them to apply learnings to their work.

Many of our custom course partners include government ministries, non-governmental organizations, and multilateral organizations that hope to establish evaluation strategies for the social programs they implement. Custom courses can offer both theoretical and practical guidance to organizations through critical stages of their programs’ evaluation cycles, from developing a theory of change and measurement strategy to using evidence in program decision-making.

This blog showcases two examples of how custom courses contributed to meaningful research and policy partnerships by building evaluation skills. These are just two of the hundreds of custom courses J-PAL has held for thousands of participants around the world: with courses delivered in Arabic, English, French, Indonesian, Portuguese, and Spanish, J-PAL’s past custom courses span forty countries, from Argentina to Vietnam.

Building data collection and analysis skills for implementation monitoring in Indonesia

Since 2021, J-PAL Southeast Asia has worked closely with the Center of Education Standards and Policy (PSKP) within the Ministry of Education, Culture, Research, and Technology of Indonesia to evaluate the impact of their Empowering Schools Program on learning outcomes. This program encompasses an integrated set of interventions to transform teaching and school management practices. Through this collaboration, J-PAL Southeast Asia learned about the PSKP’s ongoing process monitoring activities. Drawing on J-PAL’s experience collecting and analyzing quantitative data, J-PAL Southeast Asia partnered with the PSKP to develop a custom training series to share best practices and support the PSKP in conducting a survey to monitor the nationwide implementation of the Empowering Schools Program.

The training series, delivered throughout 2022 and 2023, provided an overview of basic monitoring and evaluation concepts, including survey development, sampling and sample size, and data management and analysis. In addition to in-class training, J-PAL research staff served as discussion partners to provide examples and inputs based on real cases faced by the PSKP. For example, in meetings with the PSKP on their survey questions and sampling, M. Thoriq Akbar, Senior Research Associate at J-PAL Southeast Asia, offered practical guidance on how to draw samples based on the PSKP’s list of participating schools and how to rephrase ambiguous survey questions.

"When evaluating the Empowering Schools Program, quantitative data management and analysis is a crucial component to monitor the process and measure the impact. This training provides an alternative tool for us to manage and analyze our data."

- Bakti Utama, training participant from the PSKP, Ministry of Education, Culture, Research, and Technology

Deepening a partnership for evaluating early childhood programs in Egypt

In collaboration with the Egyptian Ministry of Social Solidarity (MoSS), J-PAL Middle East and North Africa (MENA) co-designed a randomized evaluation to test the impact of providing childcare subsidies and employment services on women’s employment and empowerment outcomes, as well as children’s cognitive and socioemotional skills. To ensure the success of this collaboration and strengthen capacities for producing and using evidence, MoSS requested a tailored training for monitoring and evaluation specialists and implementers of the program.

J-PAL MENA organized a two-day training workshop for Ministry staff in January 2022 to provide foundational knowledge on randomized evaluations tailored to the Egyptian context. After covering considerations for why, when, and how to conduct a randomized evaluation through lectures and interactive case studies, the final session offered a deep-dive into the ongoing evaluation of the subsidized access to nurseries program. This created an opportunity for participants to learn more about research design and implementation decisions, contribute new perspectives, and clarify important details of the study. Participants shared positive feedback on the workshop and came away with a deeper understanding of how the evaluation will lead to actionable insights for the program and their work.

"The training proved to be highly beneficial for the Ministry staff as it provided them with a comprehensive understanding of how randomized evaluations can effectively evaluate national programs. The staff also acquired practical skills that would enable them to support the current study co-designed with J-PAL MENA. Based on these positive outcomes, we believe that randomized evaluations can serve as a valuable tool to evaluate other early childhood programs in the country."

- Mohsen Nagy, Early Childhood Development National Program Manager, MoSS, and training participant

Strengthening partnerships through custom course collaborations

Through customized training modules and discussions, J-PAL training teams are able to share relevant experiences and provide timely inputs to support partners’ objectives, forging relationships for evidence use beyond a single randomized evaluation. Custom courses support partners in pursuing rigorous monitoring and evaluation, which strengthens the culture of evidence-informed decision-making in their institutions.

Interested in learning how J-PAL can design a custom course for your organization? Visit our custom courses page to learn more and get in touch with our team.

This is the third blog in a series illustrating stories of how J-PAL’s training courses have built new policy and research partnerships and strengthened existing ones to advance evidence-informed decision-making. The first blog in the series highlights two meaningful examples from J-PAL’s Evaluating Social Programs course participants, the second blog showcases how custom courses strengthened government partnerships in Indonesia and Egypt, and the fourth shares how training activities within J-PAL's evaluation incubators helped partners engage more deeply with research design.

J-PAL’s online courses expand the reach of our impact evaluation training materials to audiences worldwide and enable participants to access content on their own schedule. These offerings include Evaluating Social Programs online, which mirrors the content in our flagship in-person training. Through a series of integrated, interactive modules, the course provides participants with a thorough understanding of randomized evaluations and how they are designed in real-world settings. Participants dive into lectures by J-PAL affiliated professors and senior staff, case studies based on research projects from the J-PAL network, and opportunities to share ideas and collaborate via the course discussion forum.

J-PAL currently offers the online version of our Evaluating Social Programs course in English, Spanish, and Portuguese, with plans to add content in French in the future. Each course is open to anyone, free to access (with the option to earn a certificate of completion for a small fee), and typically runs for several months. Since the first of these online courses launched in 2014, we have updated the course content each year to reflect developments in the field and participant feedback on what is most useful in their work.

These courses have reached tens of thousands of learners from 181 countries around the world since their launch. Over 11,000 participants have earned certificates of completion, and the worldwide course community continues to grow. This blog post zooms in on three examples of how these online courses helped participants around the world strengthen the culture of evidence use in their organizations and inform real-world evaluations.

Applying lessons to education program evaluation in the Philippines

Juvhan Rebangcos enrolled in the Evaluating Social Programs online course in 2022 to build a monitoring and evaluation community of practice at Teach for the Philippines (TFP), where he is a data and impact assessment manager supporting the organization’s mission to ensure all Filipino children benefit from high-quality education. He noted that learning from the course equipped him “with the know-how of estimating social impact, which is critical in measuring and validating student outcomes,” and that the real-world best practices shared throughout the course are especially applicable to his and his colleagues’ work.

TFP conducted a small pilot study to test the organization’s online, modular remedial literacy and numeracy program during the Covid-19 pandemic. After Juvhan completed Evaluating Social Programs online, he used what he learned in the course to refine the evaluation design, address challenges such as attrition and low attendance, and expand the sample size for TFP’s next evaluation, testing the impact of implementing the same program with face-to-face learning. He recalled that referring back to the course was his compass when managing the study earlier this year and also provided new ideas for future evaluations of TFP’s impact.

He says, “Moving forward, we know that randomized evaluations will be a go-to option whenever we are testing a pilot, pursuing program innovations, or validating outcomes. Aside from the results we could use down the line, we can learn so much from our experience conducting them.” Juvhan plans to continue to apply lessons from the course to strengthen the culture of evidence use in his organization.

“The course prepared me for designing experimental setups, handling potential research threats, and navigating the ethics of our research activities. Most importantly, my course experience aided me in crafting data-informed, evidence-based, and compelling stories to demonstrate the eventual impact of our work as an organization.”

- Juvhan Rebangcos, Data and Impact Assessment Manager, Teach for the Philippines

Democratizing knowledge to inform decision making across Latin America

J-PAL’s Latin America and the Caribbean office (J-PAL LAC) offers two online courses in Spanish and Portuguese to democratize access for learners in the region. Evaluación de Impacto de Programas Sociales, in Spanish, is led by J-PAL LAC scientific director Francisco Gallego. Avaliação de Impacto de Programas e Políticas Sociais is offered in Portuguese, in partnership with the Brazilian National School of Public Administration. J-PAL LAC’s online courses fortify training for Spanish- and Portuguese-speaking partners, helping participants to engage with evidence for impactful policy change and fostering a culture of evidence and evaluation. Past participants highlighted the practical value of the material for designing, implementing, and evaluating programs in the region, as well as the courses’ effective combination of theory and practice. The courses also serve as an introduction to J-PAL’s work and a starting point for further partnerships.

Virgílio Pires, a labor inspector at the Brazilian Ministry of Labor, connected with J-PAL to explore evaluation opportunities after completing the course in 2021. This led to partnering with J-PAL affiliated researchers Jeanne Lafortune and José Tessada on a randomized evaluation measuring the impact of worker safety interventions, with support from J-PAL LAC’s Jobs and Opportunity Initiative Brazil. The evaluation will study the effect of virtual safety training and in-person safety inspections on worker well-being in the Brazilian manufacturing sector, as well as investigate the cost-effectiveness of traditional in-person visits compared to less expensive virtual training.

"The course was crucial in sparking my interest in public policy evaluation. From this course, I realized that it is possible to conduct an impact evaluation of projects in labor inspection… Thus, our proposal emerged to conduct an evaluation focused on preventing work-related accidents and diseases. Beyond providing knowledge on [impact evaluation], the course also presented J-PAL's work, which I was previously unaware of. We were fortunate enough to present the project to J-PAL, and [J-PAL affiliated researcher] Jeanne Lafortune accepted it because it aligned with research topics she was already exploring."

- Virgílio Pires, Labor Inspector for the Brazilian Ministry of Labor

Future directions: Tailoring hybrid learning for Francophone training partnerships

In 2022, J-PAL Europe developed a French-language version of the Evaluating Social Programs online course in partnership with the Morocco Employment Lab for a hybrid training with government partners in Morocco. Course development was spearheaded by J-PAL Europe's Director of Training Ilf Bencheikh, together with J-PAL affiliated researchers Elise Huillery, William Parienté and Roland Rathelot. The course, Evaluer les Programmes Sociaux, was first used in a hybrid format with the Moroccan Court of Audit in early 2023. Around thirty magistrates followed the online course modules over a period of three weeks. After completing the online course, the magistrates attended three half-day, face-to-face sessions where they explored certain aspects in more depth, interacted with the teaching team, and got answers to their questions. This partnership demonstrates the potential of tailored hybrid courses, which combine the accessibility of asynchronous learning for foundational modules with in-person content customized to a particular sector, geographic area, or level of technical detail.

While Evaluer les Programmes Sociaux is not yet available for open enrollment, the J-PAL Europe team continues to tailor and incorporate course material into training partnerships, with upcoming events planned in Côte d’Ivoire. We hope to incorporate these materials into J-PAL’s open online course offerings in the future.

Another new online course, based on Evaluating Social Programs and carefully designed for the needs of education policymakers and practitioners in France, is currently being developed by J-PAL Europe’s Innovation, Data and Experiments in Education team. The course will launch in the coming months and join J-PAL’s suite of course offerings, marking a new area of innovation in online courses customized to support impact evaluation and evidence use by region and sector—check back on our online courses page for updates once the course is available.

Join a global community of practice

Spencer Obiero, a student at Bates College from Nairobi, Kenya who completed J-PAL’s Evaluating Social Programs online course earlier this year, said that the course reminded him of a classic team of superheroes: “I like how the different professors, like the Marvel superheroes The Avengers, have been brought together to teach this course. It’s amazing!”

Online courses, like superhero movies, are all about bringing together a large cast with diverse backgrounds to tackle critical and complex problems. With Evaluating Social Programs online, J-PAL combines regional expertise with a worldwide network to support the work of policymakers, practitioners, and program implementers in understanding and measuring impact. However, the core of J-PAL’s online courses is the dedicated learners who continually share their thoughts and experiences from different contexts, improving the courses, and each other’s learning, with their ideas.

Interested in enrolling in one of J-PAL’s online training courses? Evaluating Social Programs is open for enrollment now through December 1 on the MITx Online platform, and you can visit our online courses page for a full list of upcoming offerings. To dive even deeper into topics in economics and data analysis, consider enrolling in one of the semester-long courses in the Data, Economics, and Design of Policy MicroMasters program.