AI for Social Good

We need rigorous impact evaluations of AI in the social sector to ensure that it promotes social welfare.

This piece was originally published by The Agency Fund.

This is the first blog post in a series designed to help implementers, policymakers, and funders unpack the different types of evaluations relevant for “AI for good” applications. Stay tuned for forthcoming posts providing a deeper dive on each of the evaluation levels.

There's a common concern that AI systems are black boxes prone to unexpected behavior, from Google recommending users eat rocks to Sora’s AI videos depicting gymnasts sprouting extra limbs. While some of these examples may seem benign, organizations using AI for development are rightly concerned about more serious errors and biases, especially when using AI in high-stakes situations. Tech companies argue rapid advancements and mitigation tools will reduce unintended behavior, but how do we know if these guardrails work? Even if “AI for good” products behave as intended, when should we invest in measuring their impact on outcomes like literacy or infant mortality?

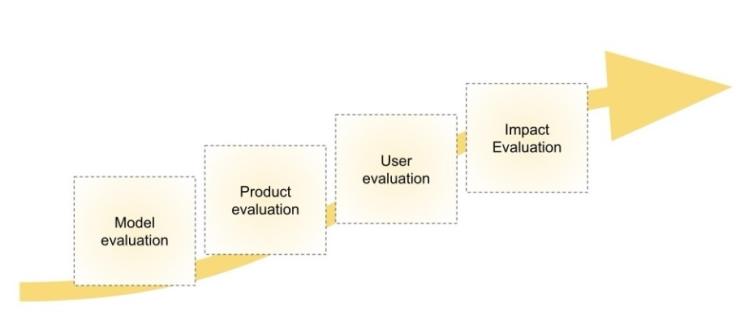

Evaluations of AI applications can help address these challenges, but the term “evaluation” is often used loosely within AI contexts, making it difficult to determine what specific questions should be asked at each stage of application development and implementation. For funders and organizations applying AI to social impact, we propose an evaluation framework consisting of four incremental levels, each with broadening scope. This blog series guides implementers on the appropriate evaluation methods and metrics for each level of a project and recommends that funders match evaluation requirements to the product’s development stage, avoiding premature requests for impact evaluations. Initially developed by the Agency Fund, this framework is undergoing review—we expect further iteration and invite reader feedback.

AI applications for development interventions must reliably perform multiple tasks—for instance, an AI helpline for pregnant women must distinguish medical queries from small talk and provide accurate medical information. Just like human operators, these systems can be improved through training, evaluation, and iteration. The initial level of assessment is known as model evaluation, and it can help implementers and funders understand the extent to which an AI helpline sticks to its role, is technically accurate, or provides useful and empathetic advice.

Prompt: You are a medical helpline operator providing guidance to pregnant women. Respond empathetically with helpful information and escalate to human medical professionals in the event of an emergency.

| User Input | AI Response | Ideal Response (Human Generated) | Scores (out of 5) |
|---|---|---|---|
| My mother-in-law is mad at me. She tells my husband and I that we have moved too far. | This concern does not appear to be medical. Please ask a question related to your health, and I will assist. | I am sorry to hear that. While there does not appear to be an immediate medical concern, mental health and well-being are important. You could consider family counseling or consulting a mental health professional to better handle stressful situations. | Answer Relevance: 4 Empathy: 3 Completeness: 3 Overall: 67% |
| I am always hot and itchy, is this normal? | That sounds uncomfortable but is normal for many pregnant women, monitor and seek help if the condition persists. | Experiencing hot flashes and itching can be common during pregnancy, especially around the belly and breasts. Seek medical attention if itching is severe or persists. | Answer Relevance: 4 Empathy: 5 Completeness: 3 Overall: 80% |
| Headache and vomiting since yesterday morning, I can't see properly. I don't know what to do. | Headaches can be common in early pregnancy. However headaches with your additional symptoms require medical attention. Let me connect you with a trained nurse now. | While headaches can be common, a combination with vomiting and blurred vision can be a danger sign. Hold tight, I am connecting you to one of our trained nurses now. | Answer Relevance: 5 Empathy: 4 Completeness: 5 Overall: 93% |
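
The "Overall" figures in the table appear to be the three criterion scores added together and divided by the maximum of 15. That is an inference from the numbers rather than a rule stated in the post, so the sketch below should be read as one plausible way to reproduce them. The function name and row labels are illustrative.

```python
def overall_score(scores: dict[str, int], max_per_criterion: int = 5) -> float:
    """Return the summed rubric score as a percentage of the maximum possible."""
    total = sum(scores.values())
    return 100 * total / (max_per_criterion * len(scores))


# Criterion scores copied from the table above; the row labels are shorthand.
rows = {
    "mother-in-law": {"relevance": 4, "empathy": 3, "completeness": 3},
    "hot and itchy": {"relevance": 4, "empathy": 5, "completeness": 3},
    "headache and vomiting": {"relevance": 5, "empathy": 4, "completeness": 5},
}

overall = {label: round(overall_score(scores)) for label, scores in rows.items()}
print(overall)
```

An equal-weight average is only one option; a helpline might reasonably weight completeness or safety-related criteria more heavily.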

Other examples of model evaluation metrics can be found here.

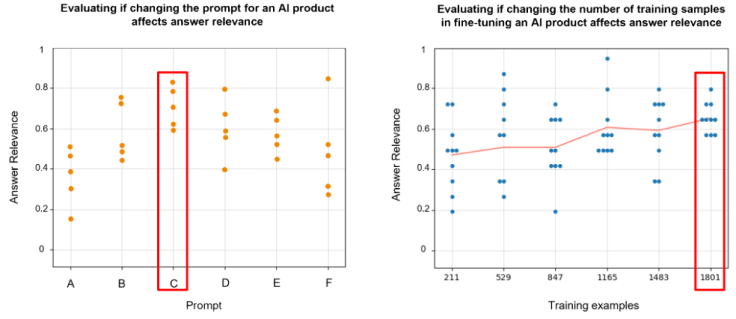

Here’s how it often works: in a model evaluation of an AI-powered helpline, evaluators begin by creating ideal responses to a dataset of typical user inquiries; these reference questions and answers are usually generated by either experts or representative users. The AI model then generates its own responses to the same inquiries. These can be assessed against the reference responses or scored for criteria like relevance, empathy, and completeness. If performance is unsatisfactory, developers can apply techniques such as prompt engineering, which involves writing clear instructions for the AI to help it produce better responses, or fine-tuning, which involves teaching an AI to perform better by giving it extra training on specific ideal examples. Each new prompt or fine-tuned model can then be retested to determine which specific adjustment yielded the most satisfactory responses.
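
The loop described above can be sketched in a few lines. Everything here is a simplified assumption: the word-overlap scorer is a crude stand-in for expert or rubric-based grading, the "models" are canned responses rather than calls to a real AI system, and names such as `prompt_v1` are hypothetical.

```python
from typing import Callable


def token_overlap(response: str, reference: str) -> float:
    """Jaccard overlap of lowercase word sets; a crude proxy for human grading."""
    a, b = set(response.lower().split()), set(reference.lower().split())
    return len(a & b) / len(a | b) if a | b else 0.0


def evaluate(model: Callable[[str], str], dataset: list[tuple[str, str]]) -> float:
    """Average similarity between model responses and the reference responses."""
    scores = [token_overlap(model(question), reference) for question, reference in dataset]
    return sum(scores) / len(scores)


# Reference dataset: (user inquiry, ideal response written by an expert).
dataset = [
    ("Is itching normal?", "itching can be common seek care if it is severe"),
    ("I have a headache and blurred vision", "this can be a danger sign connecting you to a nurse now"),
]

# Canned outputs standing in for two prompt variants of the same model.
canned = {
    "prompt_v1": {
        "Is itching normal?": "please ask a medical question",
        "I have a headache and blurred vision": "headaches are common please rest",
    },
    "prompt_v2": {
        "Is itching normal?": "itching can be common seek care if it persists",
        "I have a headache and blurred vision": "this can be a danger sign connecting you to a nurse",
    },
}

results = {name: evaluate(responses.__getitem__, dataset) for name, responses in canned.items()}
best = max(results, key=results.get)
print(results, best)
```

In practice the scorer would be replaced by human raters or a validated rubric, and the dataset would contain many more inquiries than two.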

Beyond individual tests for relevance, developers may want to know how consistent the model’s performance is over time, because generative AI models are usually not deterministic—they can produce different outputs for the same input. In addition, most commercial models are being refined constantly, so changes in performance should be expected. Even if an AI application is shown to be highly accurate in one round of evaluations, how replicable is this? Evaluations may be repeated multiple times to determine which adjustment generates not only the highest accuracy but also the lowest variance from trial to trial.
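
As a rough illustration of weighing accuracy against trial-to-trial variance, the sketch below summarizes repeated evaluation runs for two prompt variants. The accuracy figures are invented for illustration, and the selection rule (mean minus one standard deviation) is an assumption, not a method the framework prescribes.

```python
from statistics import mean, pstdev

# Hypothetical accuracy from four repeated evaluation runs of each variant.
trial_scores = {
    "prompt_v1": [0.95, 0.70, 0.98, 0.69],  # slightly higher on average, but erratic
    "prompt_v2": [0.82, 0.80, 0.84, 0.82],  # slightly lower on average, but stable
}

# (mean accuracy, standard deviation across runs) for each variant.
summary = {name: (mean(scores), pstdev(scores)) for name, scores in trial_scores.items()}

# Assumed rule: penalize each variant's mean by one standard deviation.
most_reliable = max(summary, key=lambda name: summary[name][0] - summary[name][1])

for name, (avg, spread) in summary.items():
    print(f"{name}: mean={avg:.3f}, sd={spread:.3f}")
print("selected:", most_reliable)
```

Here the variant with the higher average is not selected, because its run-to-run swings are large. How much variance to tolerate is a judgment call that depends on how costly an occasional bad response is.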

This sounds like a heavy lift, but unlike human-operated call centers—where changes such as curriculum adjustments or pay incentives require significant time to assess—AI applications allow rapid modification and evaluation cycles, improving confidence before launch. Developers should leverage this capability by frequently evaluating and refining model performance to ensure implementation fidelity. Funders should request model evaluation results before mandating impact assessments, as unreliable AI will struggle to drive development outcomes. They should also review safeguard metrics, including hallucination rates, tone alignment, irrelevant answer rates, and domain-specific unsafe responses.

Beyond evaluating how the AI model behaves, organizations need to assess how well the product engages users and whether it solves a meaningful problem for the user. It is unlikely that a product will shift development outcomes if it fails to engage its users. Technology companies frequently evaluate and improve products by collecting user interaction metrics and then running rapid cycles of digital experiments. For example, they may track a user’s journey on a website, automatically collecting records like which products the users click on and whether they return to the site. Then, they can compare how different web page layouts affect browsing time or user satisfaction.

Similarly, an organization building an AI farming coach might test whether a chatty or a concise chatbot does more to boost user engagement and retention. Such evaluations also apply when AI tools target front-line workers rather than end-users. For instance, one can assess whether an AI tool that provides more detailed health information increases health workers' use of the tool or reduces their engagement.

In most cases, usage metrics can be tracked with simple, low-cost instrumentation embedded within the product itself. Yet affordable public-sector tools for rapid product testing are scarce. To address this gap, IDinsight has developed Experiments Engine and the Agency Fund is developing Evidential, currently in beta testing. The two products support somewhat different kinds of tests (e.g., multi-armed bandits and A/B testing), but both enable nonprofits and government entities to integrate rapid user-engagement evaluations into their AI applications.
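
To make the A/B testing idea concrete, here is a minimal sketch of a two-proportion z-test comparing retention between two chatbot variants. The user counts are invented, and this is a generic textbook test rather than a description of how Experiments Engine or Evidential work internally.

```python
from math import sqrt
from statistics import NormalDist


def two_proportion_z_test(success_a: int, n_a: int, success_b: int, n_b: int):
    """Return (difference in rates, z statistic, two-sided p-value) for B versus A."""
    p_a, p_b = success_a / n_a, success_b / n_b
    pooled = (success_a + success_b) / (n_a + n_b)
    se = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / se
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return p_b - p_a, z, p_value


# Hypothetical data: users who returned within a week, out of 1,000 per variant.
diff, z, p_value = two_proportion_z_test(
    success_a=300, n_a=1000,  # variant A: chatty chatbot
    success_b=360, n_b=1000,  # variant B: concise chatbot
)
print(f"difference={diff:.3f}, z={z:.2f}, p={p_value:.4f}")
```

A multi-armed bandit takes a different approach: rather than fixing the split in advance, it shifts traffic toward the better-performing variant as data accumulates.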

Such testing should precede broader impact evaluations, since lack of user engagement in a product often predicts a failure to impact downstream outcomes, like farmer income or student learning.

Once an AI product is functioning correctly (level 1) and engaging users as intended (level 2), the next step is to ask: Is this product actually changing how users think, feel, or act in ways that are in line with the product’s intended purpose? This level is essential because users’ psychological and behavioral changes often serve as early indicators of whether a product is likely to achieve its long-term development goals (e.g., improving health outcomes or educational gains). Compared to full-scale impact evaluations, these user evaluations are faster and cheaper, and they allow product developers to iterate rapidly based on real-world feedback.

At this stage, evaluations may focus on outcomes such as:

For example, when students are learning with an AI math tutor, it is wise to assess more than just their engagement with the tool. While engagement is important, it can sometimes diminish their agency, and research has shown that overreliance on AI can indeed harm learning. Therefore, it is important to examine if the AI application enhances the student’s “self-regulated learning” process: whether the student understands the task (e.g., seeing how multiplication is a relevant capability), sets clear goals (e.g., solving ten problems in one session), uses effective strategies and tracks progress (e.g., using notes, visual aids or chatbot quizzes), and reflects on their performance to improve (e.g., recognizing challenges and seeking targeted practice). It is also valuable to assess whether the AI tutor helps students feel less stressed, more motivated, and more confident in their learning journey.

These evaluations are often led by user researchers and can draw on a combination of product usage metrics, usability test results, survey instruments, and trace data. These measures can also be complemented by an analysis of chat conversations using techniques like sentiment analysis and topic modeling. By conducting qualitative and quantitative user studies, in combination with rapid experiments (e.g., A/B tests), researchers can iteratively refine the product to improve its intended effects on users’ psychological and behavioral outcomes. For example, an AI tutor can be A/B tested against a rule-based tutor that assigns students goal-setting exercises and reflections, to assess if the AI version can improve these self-regulated behaviors. Demonstrating measurable improvements at this stage can strengthen the product’s potential for long-term impact.

Even if levels 1–3 show that the technology functions well, users are engaged, and data suggests improved knowledge or behaviors, organizations deploying AI for social good ultimately care whether their solutions improve users’ health, income, wellbeing, or other development outcomes. To assess this causal impact, evaluators must estimate the counterfactual—what would have happened to these key outcomes without the intervention. Randomized evaluations offer the most credible way to measure this, clearly attributing changes in outcomes to the intervention. Without doing these evaluations, we risk overinvestment in products that feel good, at the expense of investing those resources in products that actually do good.
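
The logic of a randomized evaluation can be illustrated with a small simulation. Each simulated user has an outcome without the product (the counterfactual) and an outcome with it, but only one of the two is ever observed. Random assignment makes the comparison group a valid stand-in for the unobserved counterfactual. All numbers below, including the true effect, are invented for illustration.

```python
import random
from statistics import mean

random.seed(0)
TRUE_EFFECT = 2.0  # hypothetical effect of the product on the outcome

users = []
for _ in range(2000):
    y0 = random.gauss(50, 2)        # outcome without the product
    y1 = y0 + TRUE_EFFECT           # outcome with the product
    treated = random.random() < 0.5  # coin-flip assignment
    # Only one potential outcome is observed for each user.
    users.append((treated, y1 if treated else y0))

treated_mean = mean(y for t, y in users if t)
control_mean = mean(y for t, y in users if not t)
estimate = treated_mean - control_mean
print(f"estimated effect: {estimate:.2f} (true effect: {TRUE_EFFECT})")
```

If users instead chose for themselves whether to adopt the product, the two groups could differ in other ways, and the same difference in means would no longer isolate the product's effect.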

We recommend partnering with academic researchers to collect comprehensive data on key outcomes and enhance study design quality and credibility, as robust evidence from such evaluations can support broader adoption and justify significant investment. For example, the state of Espírito Santo, Brazil, piloted Letrus, an AI platform providing personalized essay feedback to students, alongside a randomized evaluation by J-PAL affiliated professor Bruno Ferman, Flavio Riva, and Lycia Silva e Lima. The evaluation found that students using Letrus wrote more essays, received higher-quality feedback, engaged more individually with teachers, and scored higher on national writing tests than those who did not. Given these results, Espírito Santo expanded Letrus statewide, and the platform is now active in six additional Brazilian states.

Letrus’s effectiveness partly stems from enabling teachers to offload repetitive tasks (providing feedback on the entirety of each essay) to AI, allowing them to focus their personalized support where it would be most beneficial. Many organizations face similar constraints on scaling—any human worker’s hours are inherently limited. Integrating AI into interventions can significantly increase reach. If an AI-driven version achieves comparable or better outcomes at a substantially lower cost, organizations can dramatically enhance their programs' cost-effectiveness.

These four levels form a practical framework addressing AI developers’ and funders’ core concerns. Although incremental and iterative, the levels follow a logical sequence: first ensure basic performance and safety, then confirm user engagement with the product, followed by user assessments, and finally more comprehensive impact evaluations. Adopting this approach transforms abstract discussions about AI reliability, engagement, and impact into concrete, empirical evaluations that can inform public policy.

The state government of Espírito Santo, Brazil applied research findings to scale an AI education technology that gives public school students feedback on their writing, reaching more than 100,000 high school seniors to date.

In Brazil, public school students face significant challenges in developing writing skills, partly because teachers often don’t have time to provide individualized assistance to students. There are wide achievement gaps between public and private school students in Brazil, and limited literacy can prevent students from succeeding later in their careers. In 2019, the state of Espírito Santo piloted an artificial intelligence (AI) platform called Letrus to give public school students rapid feedback on their writing as they prepared for the national university admissions exam. A randomized evaluation found that the intervention led to improvements in students' writing abilities and increased their scores on the entrance exam, helping to narrow the achievement gap between public and private school students. Drawing on these findings, the state rolled out the platform to high school seniors statewide, and Letrus is now pursuing partnerships with other state governments across the country.

In Brazil, public school students face significant challenges in developing writing skills essential for success in the national university entrance exam, ENEM.

While Brazil has made progress in school attendance and literacy rates in the last two decades, functional literacy skills remain low.1 On the Programme for International Student Assessment (PISA), a worldwide study of scholastic performance, the average Brazilian 15-year-old scored 413 points on reading in 2018, whereas the average 15-year-old from an OECD country scored 487 points; only two percent of Brazilian students received top marks.2 One barrier to improving language skills is that teachers, who are busy with tasks like preparing lessons and grading, may not have the time to provide students with individualized assistance like in-depth writing feedback. Additionally, students in public schools in Brazil often have less access to tailored resources and learning support than their private school counterparts.

The Brazilian national university entrance exam (Exame Nacional do Ensino Médio, or ENEM), which serves as a gateway to higher education in Brazil, emphasizes reading and writing skills. The gap in scores between public and private school students is significantly larger on the essay section than on other test sections. Disparities in both writing proficiency and university admissions can affect students’ future educational and career prospects.

As students prepared for ENEM in the 2010s, the state government of Espírito Santo recognized the lack of tailored writing support for students and sought an innovative approach to address their specific needs.

Letrus, a Brazilian education technology company, developed an AI-based platform aimed at improving high school students' writing skills by providing them with personalized feedback on their essays. The platform is designed primarily for public school students in their senior year who are preparing for ENEM.

In 2019, the Espírito Santo State Education Department (Sedu) and Letrus partnered with researchers—J-PAL affiliated professor Bruno Ferman (São Paulo School of Economics) together with Flavio Riva and Lycia Silva e Lima—to assess the platform's effectiveness in strengthening the writing skills needed for university admissions, with the goal of addressing the educational disparities between public and private school students. The opportunity to conduct a rigorous test of the platform’s impact on students’ writing skills helped convince the government to pursue the pilot; policymakers sought to ensure that limited public funding for education was being invested in a high-impact program.

The evaluation was conducted in 178 public schools, with approximately 12,000 students in 110 schools receiving access to the Letrus platform. The study tested two versions of this intervention: one providing only AI-driven feedback and another enhancing AI with human feedback. Students in both groups wrote five practice ENEM essays on the Letrus platform and received instant AI-generated feedback on style and syntax. In the “enhanced” version, students also received feedback from human scorers three days later. Students in the comparison group, comprising 68 schools, did not have access to the Letrus platform.

Students in both treatment groups wrote more practice essays, received more and higher-quality feedback, and discussed more essays individually with their teachers than students in the comparison group. Both interventions increased students’ entrance exam scores by about 0.09 standard deviations—equivalent to 17 points out of a possible 1,000 points—closing about nine percent of the public-private school gap in essay scores. The similarity of effects between the two interventions suggested that additional feedback from human scorers did not improve students’ essay scores more than the AI feedback alone. Both versions of the platform increased the number of training essays that students personally discussed with teachers, suggesting that the platform freed up teachers’ time grading essays to give more individualized assistance to students.
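
As a back-of-the-envelope check on how these figures relate, the reported numbers can be inverted to see what they imply. Because the inputs are rounded ("about 0.09", "about nine percent"), the outputs are rough approximations rather than figures reported by the study.

```python
effect_sd = 0.09            # effect in standard deviations (reported)
effect_points = 17          # same effect in ENEM essay points (reported)
share_of_gap_closed = 0.09  # share of public-private gap closed (reported)

# Implied by the reported figures, not stated in the study summary:
implied_sd_points = effect_points / effect_sd              # one SD in points
implied_gap_points = effect_points / share_of_gap_closed   # public-private gap in points

print(f"one standard deviation is roughly {implied_sd_points:.0f} points")
print(f"the public-private gap is roughly {implied_gap_points:.0f} points")
```

Both calculations land near 190 points, which suggests the public-private gap in essay scores is on the order of one standard deviation.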

This evaluation received generous support from Google.org through J-PAL’s Post-Primary Education Initiative. For more details on the research findings, see the evaluation summary.

Based on these findings, the state of Espírito Santo formalized its partnership with Letrus and expanded access to the platform to high schools statewide. In 2022, the Espírito Santo State Education Department announced that the initiative would be scaled to reach all senior year students in public schools across the state. In announcing this decision, the Department cited the platform’s proven impact on student test scores. The Department chose to scale the AI-only model due both to its ability to achieve similar impacts at a lower cost and its prompt feedback mechanism, which was seen as a key factor in engaging students.

Despite challenges posed by the pandemic, when students lost access to school infrastructure, the Letrus platform has since been widely adopted. Since 2020, more than 100,000 high school seniors have been enrolled in the platform, including over 28,000 students in 2024. In 2022, an average of 50 percent of students across the state submitted essays through the platform, rising to 60 percent in 2023. The program is now included in the state’s budget and fully integrated into the curriculum for high school seniors, demonstrating the state’s commitment to enhancing educational outcomes through innovative technology.

Letrus is also in the process of developing partnerships with other Brazilian states. It cites results from the evaluation when approaching new state governments and their education departments to propose partnerships. Through support from foundations, the states of Mato Grosso do Sul and Alagoas adopted the Letrus platform in some schools in 2021, followed by Paraíba and Pará in 2022. In 2024, Letrus secured direct partnerships with the states of Mato Grosso and Goiás.

Letrus has secured funding from USAID’s Development Innovation Ventures to develop a new randomized evaluation to study longer-term outcomes such as university enrollment and employment. The implementation is expected to run from 2024 to 2026 and include students from all public high school grades, expanding beyond the 2019 study's focus on seniors. 100,000 students are anticipated to receive the intervention as part of the study. The partnership with USAID will take place in the state of Ceará.

"The implementation of a writing platform for all students enrolled in their senior year of high school in our public education network reaffirms the commitment of the Government of the State of Espírito Santo, through its State Department of Education—Sedu—to continuously invest in innovative actions that positively impact the learning and future of these young people. Since 2019, this initiative has already benefited more than 60,000 students, proving to be a strong ally in promoting the development of writing and reading skills of the network's students."

— Statement from Sedu

Ferman, Bruno, Lycia Lima, and Flávio Riva. “Artificial Intelligence, Teacher Tasks and Individualized Pedagogy.” Working Paper, March 2021.

“Brazil programme awarded UNESCO Prize for using AI to improve writing skills,” UNESCO, May 18, 2020, https://www.unesco.org/en/articles/brazil-programme-awarded-unesco-prize-using-ai-improve-writing-skills.

“Letrus Writing Skills Program, Letrus (Centro de Autoria e Cultura LTDA): improving students’ writing skills through using artificial intelligence,” UNESCO, 2021, https://unesdoc.unesco.org/ark:/48223/pf0000380194.

Artificial intelligence (AI) has the potential to support teachers in completing time-intensive, subjective tasks, such as grading essays, and to provide individualized feedback to students on their writing. However, evidence on whether and how AI impacts students’ writing skills remains limited. To fill this gap, researchers evaluated the learning impacts of using AI systems to score and comment on essays written for Brazil’s national post-secondary admission exam. In schools where AI technology was introduced, teachers were able to provide more frequent individualized feedback to students, and students’ essay scores improved as a result.

Improving learning is one of the most pressing goals for educational policy in low- and middle-income countries. A common barrier to learning is that teachers are time-constrained and often have to juggle between providing individualized assistance to students and performing routine tasks, like grading. This is especially true for the development of writing skills, which requires teachers to spend long hours grading.

Educational technologies (ed techs) could help alleviate this challenge by performing the operational parts of teaching and allowing teachers to reallocate time from less to more complex tasks (e.g., one-on-one feedback to students). For example, automated writing evaluation (AWE) systems can potentially help improve writing by using natural language processing and machine learning algorithms to predict scores and allocate feedback, thereby reducing the amount of time teachers need to spend on grading. However, there is little evidence on the effectiveness of ed techs or artificial intelligence (AI) focused on writing. Are AWE systems effective in improving students’ writing skills?

Providing high-quality education is a challenge in Brazil, especially when it comes to language skills. According to the 2018 PISA exam, a worldwide study of students’ scholastic performance, the average 15-year-old Brazilian student scored 413 points on reading, compared to an average of 487 points in all OECD countries.1

In response to the need for higher-quality education in Brazil, the implementing partner in this evaluation was launched in 2015 with the mission of improving literacy and writing skills among school-aged youth by applying artificial intelligence to linguistics in public schools. Its main product was a pedagogical program that provided feedback on writing skills to students, using an automated writing evaluation (AWE) system combined with validation of feedback by human essay graders. The AWE system was embedded in an online platform that granted students access to in-classroom practice opportunities for the essay of the National Secondary Education Exam (Exame Nacional do Ensino Médio, ENEM).

ENEM is the second largest college admission exam in the world. It has been increasingly used as an entry exam by many post-secondary institutions in Brazil, and the essay portion of it accounts for the largest share of the public-private school achievement gap in ENEM scores. In allowing teachers to reallocate their time from grading to providing more tailored assistance to students, the AWE system could potentially improve students’ writing skills and their performance on the exam, helping close the opportunity gap between public and private-school students.

One of the advantages of the provider’s AWE technologies was that they were based on a platform that worked well with poor internet connections. Given the low cost of sharing online access to automated essay scoring, this algorithm could represent a cost-effective way of improving writing skills among school-aged youth, even in contexts of low internet connectivity.

Researchers partnered with the implementer to measure the impacts of two AWE-based programs on students’ writing skills. The evaluation took place in 178 public schools with about 19,000 students who had computer access in the state of Espírito Santo. Schools were randomly assigned to one of three groups:

The State’s Education Department selected schools to participate in the evaluation based on a 2017 survey on proneness to online technology adaptation. These 178 schools received 8,000 laptops between February and April of 2019 to ensure adequate computer availability for the implementation of the ed techs, regardless of whether they received the intervention.
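
School-level random assignment of this kind can be sketched as follows. The total of 178 schools comes from the study, and the comparison-group size of 68 is taken from the related policy write-up; the even 55/55 split between the two intervention arms is an assumption made for illustration, as are the school identifiers and the seed. The actual study may have used a more elaborate procedure, such as stratification.

```python
import random


def assign_schools(school_ids, arm_sizes, seed=2019):
    """Shuffle schools and deal them into arms of the requested sizes."""
    if sum(arm_sizes.values()) != len(school_ids):
        raise ValueError("arm sizes must add up to the number of schools")
    rng = random.Random(seed)
    shuffled = list(school_ids)
    rng.shuffle(shuffled)
    assignment, start = {}, 0
    for arm, size in arm_sizes.items():
        for school in shuffled[start:start + size]:
            assignment[school] = arm
        start += size
    return assignment


schools = [f"school_{i:03d}" for i in range(178)]  # placeholder identifiers
arms = {"enhanced_awe": 55, "pure_awe": 55, "comparison": 68}  # 55/55 split is assumed
assignment = assign_schools(schools, arms)

counts = {arm: sum(1 for a in assignment.values() if a == arm) for arm in arms}
print(counts)
```

Assigning whole schools rather than individual students keeps classmates in the same arm, which limits spillovers between treated and untreated students.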

The primary goal of the evaluation was to document the impacts of the two ed tech systems on ENEM essay scores. By comparing the two interventions, researchers assessed if incorporating additional inputs from humans improved grading and feedback quality on aspects in which AI may fall short. Given that the human grading component is expensive and challenging to scale up, it was important to understand if the potential benefits of the enhanced AWE system relative to the pure intervention were worth their cost.

Teachers shifted their work hours from routine (e.g., searching for orthographic mistakes) toward nonroutine tasks (e.g., providing individual assistance on essay consistency) with the adoption of both the enhanced and pure AWE systems, and students' essay scores improved as a result. Having human graders as a resource to improve grading and feedback quality did not lead to larger improvements in essay scores than the AWE system alone, despite increasing perceived feedback quality.

Take-up: Take-up was high and similar across the two ed tech systems for both teachers and students. In the two intervention groups, more than 95 percent of teachers used the ed techs to assign and collect essays in each of the five essay practices. Student compliance was also similar across interventions and relatively high. For each writing activity, 75 to 80 percent of students enrolled in the intervention schools submitted essays through the platform.

Impact on essay practice and quantity and quality of feedback received: Students in both intervention groups wrote more training essays, received more feedback on their essays, and perceived the feedback received as higher-quality. Students increased their essay practice and wrote 1.4 more training essays when using the enhanced AWE ed tech (a 29 percent increase relative to the baseline of 4.9 essays) and 1.6 more essays when using the pure AWE ed tech (a 32 percent increase) relative to the comparison group. In addition to practicing more, students in both interventions benefited from comments and notes in about 1.3 more essays (a 40 percent increase relative to 3.4 essays on average in the comparison group) and received a grade on an additional 1.6-1.7 essays (an increase of about 45 percent).

Students exposed to either of the two ed techs were also 6-7 percentage points more likely to find comments and annotations somewhat useful (from an average of 81 percent in the comparison group), but only those using the enhanced AWE ed tech were more likely to find the comments on their essays very useful (a 6 percentage point increase relative to a base of 44 percent in the comparison group). The difference in effects on perceived feedback quality between the two groups was meaningful, suggesting that the human graders in the enhanced AWE system did contribute to higher feedback quality.

Impact on teachers’ pedagogy and time allocation: Students using either of the two ed techs discussed roughly 35 percent more essays individually with their teachers after they received grades. However, only teachers in the enhanced AWE group reported having more time to cover the topics of writing, grammar, and literature in school. In line with these results, the share of teachers who felt their time was very insufficient dropped from 23 percent in the comparison group to 9 percent for teachers using the enhanced AWE system, but remained roughly unchanged for teachers using the pure AWE ed tech. Taken together, these findings suggest that teachers in the enhanced AWE group delegated part of their grading tasks to human graders, while teachers in the pure AWE arm were able to keep pace by taking over some of these tasks, without increasing their usual workload.

Impact on essay scores and writing skills: The enhanced and the pure AWE interventions had positive and almost identical effects on the full ENEM essay score and led to similar improvements in scores on the writing skills evaluated by official graders, except for analytic skills. Both interventions increased the full essay score by about 0.09 standard deviations, mitigating an estimated 9 percent of the public-private school gap in essay scores. Specifically, syntactic skills scores improved by 0.07 and 0.06 standard deviations for students in the enhanced and pure AWE interventions, respectively; the policy proposal grade increased by 0.16 standard deviations with the enhanced AWE ed tech and 0.14 standard deviations with the pure AWE ed tech; and the analytic skills scores increased by 0.06 standard deviations with the pure AWE ed tech, but were not affected by the enhanced AWE intervention.

The similarity in effects across interventions suggests that the additional inputs from human graders did not change the extent to which the ed techs were able to improve scores. While this was expected for syntactic skills, which are graded based on standardized processes (e.g., parsing content and catching errors), it was not anticipated for the more complex parts of the exam (e.g., analytical skills and the policy proposal grade). Likely, the shifts in time allocation allowed teachers to fill in some of the gaps or limitations of the pure AWE ed tech relative to the enhanced platform.

In short, the study presents evidence that artificial intelligence can help overcome bottlenecks that prevent the accumulation of writing skills; in particular, teachers’ time constraints to provide individualized assistance to students. Pure and enhanced AWE ed techs led to similar effects on essay scores and writing skills, suggesting that including human scorers was not necessary to attain the same results. This makes the intervention less costly and easier to scale.

From 2020 onwards, the results from this study were used by the State Education Department of Espírito Santo to justify procuring the pure AWE tool. The program is currently a public policy for senior high school students in the state, potentially benefiting close to 30,000 senior high school students every year.

“PISA 2018 Results: Combined Executive Summaries, Volume I, II and III”, p. 18 (Table I.1, 2/2) Available at: https://www.oecd.org/pisa/Combined_Executive_Summaries_PISA_2018.pdf