Take practical steps to de-identify and publish research data with J-PAL’s new guides

We are pleased to announce the publication of two new methods guides to de-identifying and publishing research data. These guides draw on J-PAL’s experience of publishing research data on randomized evaluations in the social sciences for more than a decade. They provide practical advice for students, researchers, and anyone else publishing their own or others’ data.

Researchers who plan to publish their data should make every effort to minimize the risk of re-identification of their study participants, as is commonly required by ethical standards, IRB protocols, and legal requirements. This is done through a process known as de-identification, in which variables that could be used to identify individuals are masked through techniques such as aggregation or encoding, or removed from the dataset altogether.
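As a rough illustration of the three techniques named above (removal, encoding, and aggregation), here is a minimal Python sketch. The column names, the salt, and the ten-year age bands are all hypothetical choices made for this example; they are not taken from the J-PAL guides, and a real de-identification plan should follow the guides and your IRB protocol.

```python
import hashlib

# Hypothetical column names, chosen only for illustration.
DIRECT_IDENTIFIERS = {"full_name", "phone_number"}


def deidentify(record, salt):
    """Return a copy of one survey record with identifiers removed or masked."""
    # Removal: drop direct identifiers from the record altogether.
    clean = {k: v for k, v in record.items() if k not in DIRECT_IDENTIFIERS}

    # Encoding: replace the original ID with a salted hash so that rows can
    # still be linked across files. The salt must be kept private, otherwise
    # the original IDs could be recovered by hashing every candidate value.
    raw_id = str(clean.pop("respondent_id"))
    digest = hashlib.sha256((salt + raw_id).encode("utf-8")).hexdigest()
    clean["study_id"] = digest[:12]

    # Aggregation: keep a ten-year age band instead of the exact age.
    age = clean.pop("age")
    lower = (age // 10) * 10
    clean["age_band"] = f"{lower}-{lower + 9}"
    return clean


record = {
    "respondent_id": 1047,
    "full_name": "Jane Example",
    "phone_number": "555-0100",
    "age": 34,
    "treated": 1,
}
print(deidentify(record, salt="keep-this-secret"))
```

The sketch only covers the mechanics. Deciding which variables count as identifiers in a given study is the harder part.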

About the guides

The Guide to Publishing Research Data includes:

- A list of considerations to make before publishing data, such as what information was provided to study participants and the IRB, the sensitivity of the data collected, and legal requirements

- Sample consent form language that will allow future publication of de-identified data

- A checklist for preparing data for publication

- And more

The accompanying Guide to De-Identifying Data approaches de-identification as a process that reduces the risk of identifying individuals. It includes:

- An overview of personally identifiable information (PII) and the responsibility of data users not to use data to try to identify human subjects

- Recommendations for handling direct identifiers (such as full name, social security number, or phone number), as well as indirect identifiers (such as month/year of birth, nationality, or gender)

- Guidance on de-identification steps to take throughout the research process, such as encrypting all data containing identifying information as soon as possible

- A list of common identifiers, including those labeled by the United States’ Health Insurance Portability and Accountability Act (HIPAA) guidelines as direct identifiers

- And more

Why publish de-identified research data

Increasing the availability of research data benefits researchers, policy partners who supported the studies, students who learn from using the data, and, importantly, the people from whom the data was collected. Data sharing can provide many benefits and opportunities to the research community, including:

- Allowing for re-use of the data by researchers, policymakers, students, and teachers around the world

- Providing opportunities for new research, such as meta-analyses and questions on external validity and generalizability of results

- Enabling the replication and confirmation of published results as well as sensitivity or complementary analyses

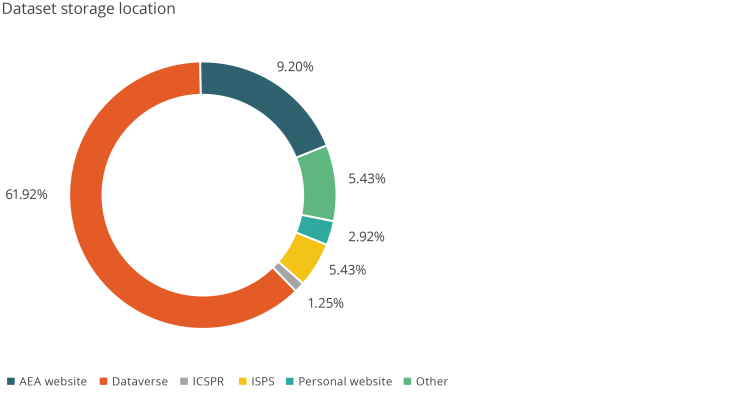

J-PAL has been committed to making research more transparent for over a decade and supports the publication of de-identified research data in a digital repository such as J-PAL and IPA’s Datahub for Field Experiments in Economics and Public Policy, the Harvard Institute for Quantitative Social Sciences Dataverse, the Inter-university Consortium for Political and Social Research at the University of Michigan, or the Yale Institution for Social and Policy Studies Data Archive.

For more on J-PAL’s work in increasing transparency in research, please see here.

Some text in this post is excerpted from the J-PAL Guide to De-Identifying Data and the J-PAL Guide to Publishing Research Data.

Empirical work in the social sciences often uses primary data collected by researchers, or other data that is not yet publicly available. Researchers spend lots of time and money to collect these data; yet after the publication of the paper, the data frequently sits in their files, rarely revisited and slowly buried, unless it is published and shared.

There has been a growing research transparency movement within the social sciences to encourage broader data publication. In this blog post we share some background on this movement and recent statistics, key factors for researchers to consider before publishing data, and tools and resources to support data publication efforts.

The research transparency movement

The notion of “open data”--the concept that data collected by researchers should be shared publicly without costs incurred by secondhand users--has been promoted by a handful of social science institutions, including the Inter-university Consortium for Political and Social Research (ICPSR), the Open Science Framework (OSF), and the Harvard Dataverse, hosted by the Institute for Quantitative Social Sciences (IQSS).

Other interesting projects (like Code Ocean and Two Ravens) have been developed to facilitate replication, data exploration, and analysis of published data. These open data initiatives aim to reduce the costs incurred by researchers in sharing their data.

Many social science journals have supported open data by putting data publication policies in place. Economics and political science journals are most likely to require authors to submit both code and data (Table 1). (Submitted code often takes the form of either the final code file that runs analyses on cleaned data, or a set of multiple files which include the final analysis code as well as code that cleaned the raw data and produced final estimation data.)

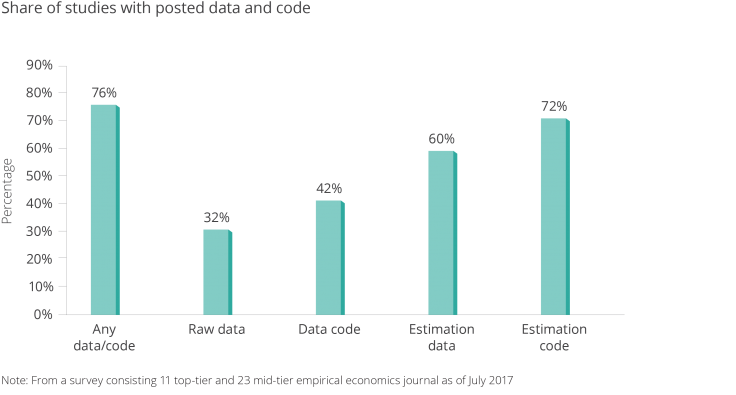

Back in May 2016, J-PAL affiliate Paul Gertler, with Sebastian Galiani and Mauricio Romero, authored a paper that looked at articles published in the previous three issues of nine leading economics journals to determine how many articles actually included data publication. The authors found that of 203 empirical papers published, 76 percent published at least some data or code (Figure 1). About one-third of the studies published raw data/code, while two-thirds published final analysis data/code. (Raw data can be more beneficial to other researchers’ work since it often includes more measures than appear in the final analysis.)

Table 1: Journal Policies on Posting Data and Code.1

| | Economics (top tier) | Economics (mid tier) | Political Science | Sociology | Psychology | General Science |
| --- | --- | --- | --- | --- | --- | --- |
| Journals analyzed | 11 | 23 | 10 | 10 | 10 | 3 |
| Code/data required before publication | 10 | 8 | 8 | 2 | 1 | 3 |
| Code/data optional/encouraged | 1 | 9 | 0 | 2 | 2 | 0 |
| Raw data must be submitted | 10 | 7 | 0 | 0 | 0 | 0 |
| Code/data verified before publication | 0 | 0 | 3 | 0 | 0 | 0 |

Note: From a survey consisting of 11 top-tier and 23 mid-tier empirical economics journals as of July 2017.

Figure 1. Share of Studies with Posted Data and Code.

Note: From a survey consisting of 11 top-tier and 23 mid-tier empirical economics journals as of July 2017.

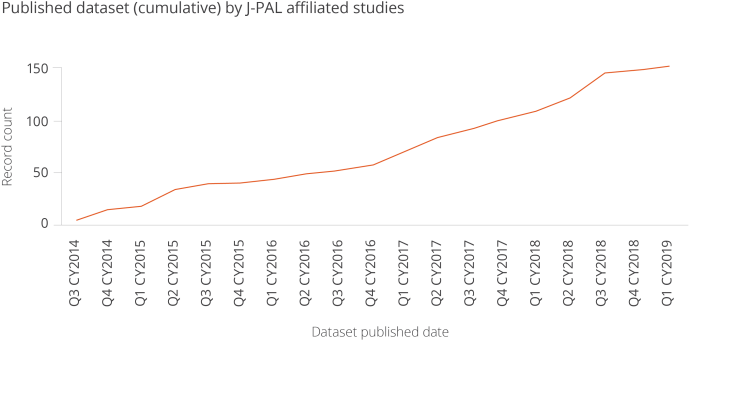

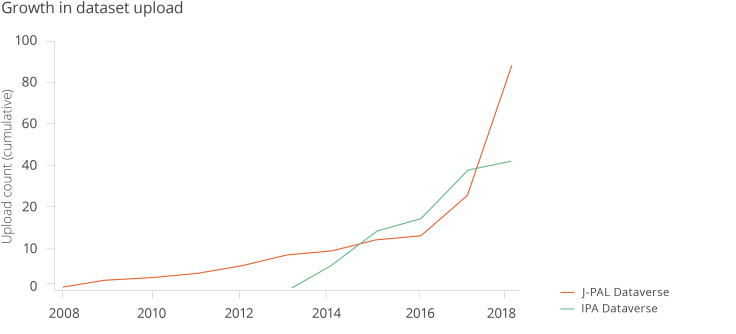

The chart below shows the rapid growth in the number of datasets published by J-PAL affiliates, by quarter.

Figure 6. Published Dataset (cumulative) by J-PAL affiliated studies

Figure 7. Dataset Storage Location

While these numbers are promising, there is room for growth. Despite journals’ data publication policies, an increasing number of authors are claiming exemptions. From 2005 to 2016, although the proportion of papers with data submitted to the American Economic Review Papers and Proceedings rose from 60 percent to around 70-80 percent, the proportion of papers submitted which received exemptions from the data publication policy rose from 10 percent to more than 40 percent.3

Authors cite confidentiality issues as the primary reason for their inability to publish data, especially for studies that deal with sensitive information. Intellectual property concerns are the second most-cited reason for requesting exemptions to data publication policies.

With these concerns in mind, authors may perceive that the benefits of data publication do not outweigh its perceived costs and risks.

For example, authors may worry that sharing data could result in losing full control of the data, in other researchers using the data for similar research before the original author’s paper is published, or in outside parties digging for errors in the data analysis. Private data providers, especially for-profit companies, are often unwilling to relinquish valuable proprietary data to the public out of concerns that competitors might gain from it.

These concerns are valid, but the risks can be reduced: manage permission settings for reuse when there are concerns about malicious users, and head off misinterpretation by being transparent about the research methods used.

The benefits of publishing data

There are many long-run benefits to publishing original research data. Open data can increase the visibility of the research and its citation counts. For example, there is some evidence that publishing research articles for open access, rather than behind a paywall, increases citations.4

Similarly, a preliminary paper by J-PAL affiliate Ted Miguel, with Garret Christensen and Allan Dafoe, concluded that papers in top economics and political science journals with public data and code are cited between 30 and 45 percent more often than papers without public data and code. It is plausible that open data platforms such as the Harvard Dataverse lead to greater visibility for the researcher, as users who browse or download a dataset are likely to see the associated study or paper.

More importantly, open data is a public good. The availability of data benefits not only researchers, but policy partners who supported the studies, students who learn from using the data, and - importantly - the people from whom the data was collected (though much more work is needed to better inform and educate study participants and members of the public on effective use of open data). Even further, open data enables government agencies to use data that otherwise is costly to obtain.

Open data has the potential to generate new ideas and spark new collaborations between researchers and policymakers--but it only serves this purpose when others are actually reusing the data. For example, open data becomes a public good when data are reused for:

- Research (reanalysis, meta-analysis, secondary analysis, replication)

- Teaching (curriculum use for presentations and assignments)

- Learning (dataset exploration)

The J-PAL Dataverse, a subset dataverse in the Harvard Dataverse, is an open data repository which stores data associated with studies conducted by J-PAL affiliated researchers.

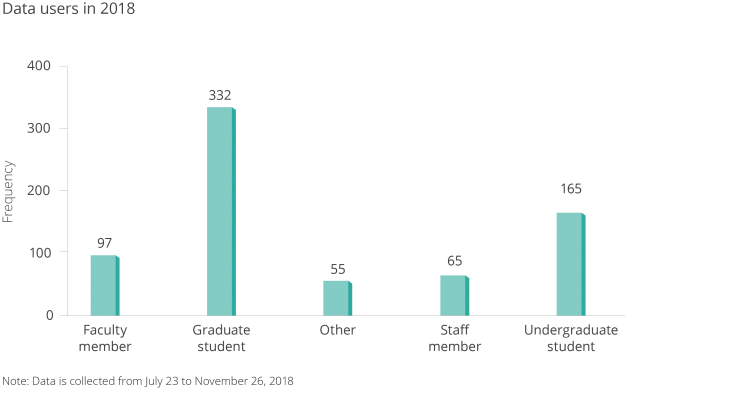

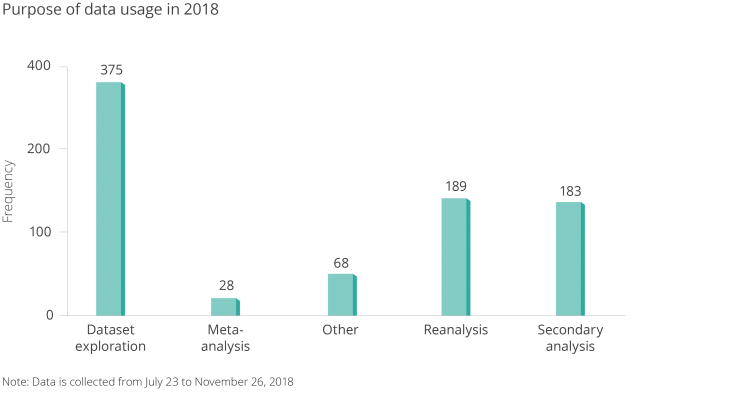

We collected data from J-PAL Dataverse users through a guestbook to better understand who was accessing this open data, and for what purpose.

Figure 4. Data Users of J-PAL Dataverse.

Note: Data was collected from July 23 to November 26, 2018.

Figure 5. Purpose of Downloaded Dataset

Note: Data was collected from July 23 to November 26, 2018.

I’m a researcher--how can I start publishing my data?

You can get credit for your hard work collecting data, and contribute to the public good, by making data public. It is worth the effort: Researchers and others often do reuse data and appreciate the effort that goes into cleaning it and curating the code.

What are important considerations for researchers before publishing data?

- If a donor is funding the research, what are their data publication requirements? Some may require publication of just the data that are part of the analysis, and some may require all data collected to be published.

- If your dataset includes administrative data from a third-party organization, are there any data use agreements (DUAs) in place that prevent you from publishing the data? Could you go back to the data provider and talk about publication? If you are writing a new DUA at the beginning of a project, can you include a mandate for data publication?

- If your dataset includes survey data, does your informed consent clause allow publication? Who did you tell your survey participants that you would be sharing the data with? How did you tell them their responses would be managed? ICPSR has several good recommendations for informed consent clauses.

- Does your institutional review board (IRB) protocol have stipulations regarding data publication? Did you mention data publication as part of your original protocol? Can you go back to your IRB for approval of data publication?

- Where will you publish your data? Are there associated fees with the repository? Does your donor mandate a specific repository?

- How sensitive is your data? Does your data contain information regarding individuals' financial, health, education, or criminal records? If so, have you considered releasing the data in a restricted repository (similar to ICPSR’s data enclaves) where researchers can only access it through DUAs and additional agreements about usage?

- Have you thoroughly evaluated the risk to participants? What efforts have you made to reduce the risk of identifying individuals in the study? Have personal identifiers been removed from the data? Could someone potentially link several indirect identifiers (such as gender, age, and address/zip code) to identify an individual in your study?

- For example, in a dataset that contains sensitive information about individuals, there is a tradeoff between the usability of the data and the privacy of human subjects. Protecting individuals should be the main priority.

- Are the data and code clearly labeled and clean? Would a third party be able to review, read, and run your code?

- Have you clearly documented how the data is organized, how it was collected, what particular variables or observations were dropped or cleaned, and how to use the data and run the code?

Researchers should have a clear answer for each question prior to data publication in order to ensure ethical and responsible use.
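For the question above about linking several indirect identifiers, one rough screening check is to count how many records share each combination of those identifiers and flag the combinations that are rare. The sketch below is only an illustration: the variable names and the threshold `k` are hypothetical, and passing such a check does not by itself establish that a dataset is safe to publish.

```python
from collections import Counter


def small_cells(records, quasi_identifiers, k=5):
    """Return combinations of indirect identifiers shared by fewer than k records."""
    counts = Counter(tuple(r[q] for q in quasi_identifiers) for r in records)
    return {combo: n for combo, n in counts.items() if n < k}


# Toy data with hypothetical variables; real checks would run on the full dataset.
records = [
    {"gender": "F", "age_band": "30-39", "zip3": "021"},
    {"gender": "F", "age_band": "30-39", "zip3": "021"},
    {"gender": "M", "age_band": "60-69", "zip3": "021"},
]

risky = small_cells(records, ["gender", "age_band", "zip3"], k=2)
print(risky)
```

Combinations flagged this way are candidates for further aggregation (for example, wider age bands or larger geographic units) or for release through a restricted repository.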

An increasing number of research and donor institutions have listed data publication as a condition for grant funding.

J-PAL’s data publication policy requires evaluations funded by our research initiatives to share their data and code in a trusted digital repository (more details are in J-PAL's Guidelines for Data Publication).

We’ve worked closely with our affiliates on curating their data and code for publication since our efforts to increase research transparency began in earnest in June 2015. This work includes cleaning code, labeling datasets, ensuring that personal information is removed or masked, documenting metadata, and publishing datasets themselves. The J-PAL Dataverse has the benefit (over a regular website like a faculty page, for example) of assigning a permanent digital object identifier (DOI) to a dataset for consistent citation, and storing the data in perpetuity through consistent URLs.

Tools and resources

J-PAL and our partner organization Innovations for Poverty Action (IPA) have created resources to help researchers publish their data and improve research transparency. IPA’s best practices for data and code management illustrate good coding practices that can be used to help clean and finalize your data and code before publication. J-PAL North America’s data security procedures for researchers provide context on elements of data security and working with individual-level administrative and survey data.

With this in mind, we’re always working on new resources to support research transparency. Have an idea? Email me at krubio [at] povertyactionlab [dot] org.

To learn more about our work to promote research transparency, visit www.povertyactionlab.org/rt.

References:

Galiani, Sebastian, Paul Gertler, and Mauricio Romero. Incentives for Replication in Economics. Tech. rept. National Bureau of Economic Research.

Christensen, Garret, and Edward Miguel. 2018. "Transparency, Reproducibility, and the Credibility of Economics Research." Journal of Economic Literature, 56 (3): 920-80.

Tennant, J.P., Waldner, F., Jacques, D. C., Masuzzo, P., Collister, L. B., & Hartgerink, C. H. (2016). The academic, economic and societal impacts of Open Access: an evidence-based review. F1000Research, 5, 632. doi:10.12688/f1000research.8460.3

1 Galiani, Sebastian, Paul J. Gertler, and Mauricio Romero. “Incentives for Replication in Economics.” SSRN Electronic Journal, 2017, 4. https://doi.org/10.2139/ssrn.2999062.

2 Galiani et al., 5.

3 Christensen, Garret, and Edward Miguel. “Transparency, Reproducibility, and the Credibility of Economics Research.” Journal of Economic Literature 56, no. 3 (September 2018): 937. https://doi.org/10.1257/jel.20171350.

4 Tennant, Jonathan P., François Waldner, Damien C. Jacques, Paola Masuzzo, Lauren B. Collister, and Chris. H. J. Hartgerink. “The Academic, Economic and Societal Impacts of Open Access: An Evidence-Based Review.” F1000Research 5 (September 21, 2016): 632. https://doi.org/10.12688/f1000research.8460.3.

Today, J-PAL and IPA are excited to announce the creation of the new Datahub for Field Experiments in Economics and Public Policy. With this new dataverse, we will be housing all datasets published on The Dataverse Project by our respective organizations in one centralized location.

Our organizations have made impressive strides in recent years in publishing data: we have made available more than 140 datasets from studies about poverty and development conducted by our researchers around the world. This joint effort will bring together our two dataverses. In doing so, we aim to facilitate the search and discovery of new and existing datasets pertaining to field experiments carried out by our organizations and affiliated researchers.

This dataverse will strengthen IPA and J-PAL’s commitment to research transparency, specifically data publication, and facilitate the reuse of research data. It will also enable us to increase our efforts in building more consistent and robust metadata. The dataverse is open to J-PAL/IPA partner organizations interested in joining and supporting further expansion of these efforts.

Anyone can visit the Datahub to search for and download existing datasets to support their own research or replicate existing research. Most datasets include original data from the study; programming code written to run the analysis; instructional documentation on the data, code, and variables; and the survey documents used to collect the data. We outlined a few reasons why researchers should publish their data in a previous blog post earlier this year.

Some examples of published data include:

Recently published

- Measuring Productivity: Lessons from Tailored Surveys and Productivity Benchmarking. Data and code for a study that explores different ways to measure productivity. It uses detailed data from 219 rug-making firms in Fowa, Egypt, collected from 2010 to 2014, to explore how well these firms produce output, revenue, and quality. The authors document how these varied productivity measures compare to the most commonly used measures in the literature.

- Clinical Decision Support for High-Cost Imaging: a Randomized Clinical Trial. Data collected from health providers who ordered low-cost imaging scanners from November 2015 to October 2016. The code analyzes the effect of software that provides information on high-cost images on the number of scan orders by health providers.

- Follow the money not the cash: Comparing methods for identifying consumption and investment responses to a liquidity shock. Data, code, and surveys used for data collection to measure the impacts of liquidity shocks on spending in a 2010-2015 study in Manila and Luzon in the Philippines.

- Getting to the Top of Mind: How Reminders Increase Savings. Data and code for the publication tables are included for this study on the ability of reminder messages to increase commitment attainment among holders of recently opened savings accounts. This study was conducted from 2006 to 2008 in a mix of rural and urban areas within the Philippines, Bolivia, and Peru.

Most downloaded

- The Diffusion of Microfinance. A dataset containing demographic and social network information on 43 villages in South India from 2006 to 2007, before and after the introduction of microfinance. The code is used to answer how participation in a microfinance program diffuses through social networks.

- Measuring the Impact of Microfinance in Hyderabad, India. A dataset that provides information on 2,800 households living in slums in Hyderabad, Andhra Pradesh from 2005 to 2007. Information was collected on household composition, education, employment, asset ownership, decision-making, expenditure, borrowing, saving, and any businesses currently operated by the household or stopped within the last year.

- A Multifaceted Program Causes Lasting Progress for the Very Poor: Evidence From Six Countries. A dataset that provides results from six randomized control trials of an integrated approach to improve livelihoods among the poor in six countries (Peru, Honduras, Ghana, Ethiopia, Pakistan, and India) from 2007 to 2014. Information was collected on consumption, food security, assets, finance, time use, income and revenues, mental health, and women’s decision-making.

- Underinvestment in a Profitable Technology: the case of Seasonal Migration in Bangladesh. A dataset containing information on the results of a 2008-2011 financial intervention in northwestern Bangladesh intended to promote out-migration to nearby urban areas during the lean season before harvest in order to mitigate famine.

Randomized evaluations can provide credible, transparent, and easy-to-explain evidence of a program’s impact. But in order to do so, adequate statistical power and a sufficiently large sample are essential.


The statistical power of an evaluation reflects how likely we are to detect any meaningful changes in an outcome of interest (like test scores or health behaviors) brought about by a successful program. Without adequate power, an evaluation may not teach us much. An underpowered randomized evaluation may consume substantial time and monetary resources while providing little useful information, or worse, tarnishing the reputation of a (potentially effective) program.

What should policymakers and practitioners keep in mind to ensure that an evaluation is high powered? Read our six rules of thumb for determining sample size and statistical power:

Rule of thumb #1: A larger sample increases the statistical power of the evaluation.

When designing an evaluation, researchers must determine the number of participants from the larger population to include in their sample. Larger samples are more likely to be representative of the original population (see figure below) and are more likely to capture impacts that would occur in the population. Additionally, larger samples increase the precision of impact estimates and the statistical power of the evaluation.

Rule of thumb #2: If the effect size of a program is likely to be small, the evaluation needs a larger sample to achieve a given level of power.

The effect size of an intervention is the magnitude of the impact of the intervention on a particular outcome of interest. For example, the effect of a tutoring program on students might be a three percent increase in math test scores. If a program has large effects, these can be precisely detected with smaller samples, while smaller effects require larger sample sizes. A larger sample reduces uncertainty in our findings, which gives us more confidence that the detected effects (even if they’re small) can be attributed to the program itself and not random chance.
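To make the link between effect size and sample size concrete, here is a minimal sketch using a common textbook approximation for comparing two group means with individual-level randomization. The formula, the 5 percent significance level, and the 80 percent power target are assumptions made for this example; they are not stated in the post, and `required_sample_size` is a hypothetical helper.

```python
from statistics import NormalDist


def required_sample_size(effect, sd, alpha=0.05, power=0.80, share_treated=0.5):
    """Approximate total sample needed to detect `effect` with a two-sided test.

    Uses the normal approximation
        N = (z_{1-alpha/2} + z_{power})^2 * sd^2 / (P * (1 - P) * effect^2),
    where P is the share of the sample assigned to treatment.
    """
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_power = NormalDist().inv_cdf(power)
    p = share_treated
    return (z_alpha + z_power) ** 2 * sd ** 2 / (p * (1 - p) * effect ** 2)


# Effects expressed in standard deviations of the outcome (sd = 1).
for effect in (0.4, 0.2, 0.1):
    n = required_sample_size(effect=effect, sd=1.0)
    print(f"effect = {effect:.1f} sd -> total sample of about {n:.0f}")
```

Because the effect enters the formula squared, halving the expected effect roughly quadruples the required sample under these assumptions.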

Rule of thumb #3: An evaluation of a program with a low participation rate needs a larger sample.

Randomized evaluations are designed to detect the average effect of a program over the entire treatment group. However, imagine that only half the people in the treatment group actually participate in the program. This low participation rate decreases the magnitude of the average effect of the program. Since a larger sample is required to detect a smaller effect (see rule of thumb #2), it is important to plan ahead if low take-up is anticipated and run the evaluation with a larger sample.
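The arithmetic behind this rule can be sketched under two simplifying assumptions that are not stated in the post: the program has no effect on people who do not participate, and nobody in the control group participates. In that case the average effect over the whole treatment group is the effect on participants multiplied by the take-up rate, and the required sample grows with the inverse square of take-up. Both function names below are hypothetical.

```python
def diluted_effect(effect_on_participants, take_up):
    """Average effect over the whole treatment group, given partial take-up."""
    return effect_on_participants * take_up


def sample_inflation(take_up):
    """Factor by which the sample must grow, relative to full take-up,
    to keep the same power (required sample scales with 1 / effect^2)."""
    if not 0 < take_up <= 1:
        raise ValueError("take_up must be in the interval (0, 1]")
    return 1 / take_up ** 2


for take_up in (1.0, 0.75, 0.5, 0.25):
    print(f"take-up {take_up:.0%}: sample must be {sample_inflation(take_up):.1f}x larger")
```

With half the treatment group participating, as in the example above, the sample would need to be about four times as large under these assumptions.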

Rule of thumb #4: If the underlying population has high variation in outcomes, the evaluation needs a larger sample.

In a given evaluation sample, there may be high or low variation in characteristics that are relevant to the program in question. For example, consider a program designed to reduce obesity, as measured by Body Mass Index (BMI), among participants. If participants have roughly similar BMIs at program start, it is easier to identify the causal impact of the program among treatment group participants. You can be fairly confident that absent the program, most members of the treatment group would have seen similar changes in BMI over time.

However, if participants have wide variation in BMIs at program start, it becomes harder to isolate the program’s effects. The group’s average BMI might have changed due to naturally occurring variation within the sample, rather than as a result of the program itself. Especially when running an evaluation of a population with high variance, selecting a larger sample increases the likelihood that you will be able to distinguish the impact of the program from the impact of naturally occurring variation in key outcome measures.
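The effect of outcome variation on power can be illustrated with the same kind of normal approximation often used in power calculations. The numbers below (a one-point reduction in BMI, 400 participants, standard deviations of 3 and 6) are invented for illustration, and `approximate_power` is a hypothetical helper rather than a method taken from the post.

```python
from statistics import NormalDist


def approximate_power(n, effect, sd, alpha=0.05, share_treated=0.5):
    """Approximate power of a two-sided test for a difference in means.

    Assumes individual-level randomization and the same outcome standard
    deviation `sd` in both groups; ignores the negligible chance of
    rejecting in the wrong direction.
    """
    p = share_treated
    standard_error = sd / (n * p * (1 - p)) ** 0.5
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    return NormalDist().cdf(abs(effect) / standard_error - z_alpha)


low_variation = approximate_power(n=400, effect=1.0, sd=3.0)
high_variation = approximate_power(n=400, effect=1.0, sd=6.0)
print(f"power with sd = 3: {low_variation:.2f}")
print(f"power with sd = 6: {high_variation:.2f}")
```

Holding the sample and the true effect fixed, doubling the standard deviation of the outcome sharply reduces power in this sketch, which is why a more varied population calls for a larger sample.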

Rule of thumb #5: For a given sample size, power is maximized when the sample is equally split between the treatment and control group.

To achieve maximum power, the sample should be evenly divided between treatment and control groups. If you add participants to the study, power will increase regardless of whether you add them to the treatment or control group because the overall sample size is increasing. However, the most efficient way to increase power when expanding the sample size is to add participants to achieve or maintain balance between the treatment and control groups.
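One way to see why an even split is most efficient: under the usual approximation, the variance of the estimated effect is proportional to 1 / (P × (1 − P)), where P is the share of the sample in treatment, and that expression is smallest at P = 0.5. The sketch below assumes equal outcome variance in both groups and ignores any difference in cost between treatment and control participants; `relative_sample_needed` is a hypothetical helper.

```python
def relative_sample_needed(share_treated):
    """Total sample needed for a given power, relative to a 50/50 split."""
    p = share_treated
    if not 0 < p < 1:
        raise ValueError("share_treated must be strictly between 0 and 1")
    return 0.25 / (p * (1 - p))


for share in (0.5, 0.6, 0.7, 0.8, 0.9):
    print(f"{share:.0%} treated: {relative_sample_needed(share):.2f}x the sample of an even split")
```

Modest imbalance costs little in this approximation, but the penalty grows quickly as the split becomes more lopsided.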

Rule of thumb #6: For a given sample size, randomizing at the cluster level as opposed to the individual level reduces the power of the evaluation. The more similar the outcomes of individuals within clusters are, the larger the sample needs to be.

When designing an evaluation, the research team must choose the unit of randomization. The unit of randomization can be an individual participant or a “cluster.” Clusters represent groups of individuals (such as households, neighborhoods, or cities) that are treated as distinct units, and each cluster is randomly assigned to the treatment or control group.

For a given sample size, randomizing clusters as opposed to individuals decreases the power of the study. Usually, the number of clusters is a bigger determinant of power than the number of people per cluster. Therefore, if you are looking to increase your sample size, and individuals within a cluster are similar to each other on the outcome of interest, the most efficient way to increase the power of the evaluation is to increase the number of clusters rather than increasing the number of people per cluster. For instance, in the obesity program example from rule of thumb #4, adding more households would be a more efficient way to increase power than increasing the number of individuals per household, assuming individuals within households have similar BMI measures.
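The comparison between adding clusters and adding people per cluster can be sketched with the standard design effect, 1 + (m − 1) × ρ, where m is the number of people per cluster and ρ is the intra-cluster correlation of the outcome. The design effect formula is a common approximation that assumes equal cluster sizes; the post does not name it, and the figures below are invented for illustration.

```python
def effective_sample_size(n_clusters, cluster_size, icc):
    """Number of independent observations a clustered sample is 'worth'.

    Divides the total sample by the design effect 1 + (m - 1) * icc,
    assuming every cluster has the same size m.
    """
    design_effect = 1 + (cluster_size - 1) * icc
    return n_clusters * cluster_size / design_effect


icc = 0.3  # hypothetical: outcomes within a cluster are fairly similar

baseline = effective_sample_size(n_clusters=50, cluster_size=10, icc=icc)
more_clusters = effective_sample_size(n_clusters=100, cluster_size=10, icc=icc)
bigger_clusters = effective_sample_size(n_clusters=50, cluster_size=20, icc=icc)

print(f"baseline (50 x 10):         {baseline:.0f}")
print(f"double the clusters:        {more_clusters:.0f}")
print(f"double the people/cluster:  {bigger_clusters:.0f}")
```

Both expansions double the total sample, but in this sketch doubling the number of clusters doubles the effective sample, while doubling the number of people per cluster adds comparatively little.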

Still have questions? Read our short publication, which goes into further detail on how to follow these rules of thumb to ensure that your evaluation has adequate power.