Strengthening randomized evaluations through incorporating qualitative research, Part 1

Randomized evaluations allow researchers to measure the impact of programs and policies on a range of outcomes. Using this approach in North America, J-PAL researchers have recently examined a wide range of topics, including the effects of Medicaid on rates of health care utilization and the impact of a housing mobility program on the likelihood of families moving to lower-poverty neighborhoods.

But what mechanisms are driving the effects of these programs and policies? How did the context, design, and implementation of the program or policy influence the result? If replicated in a different context, will the program have the same effects? Is the study asking the right question?

Researchers can often collect quantitative data and design evaluations to shed light on these types of questions, but there’s always more to learn. Qualitative methods, such as direct observation, in-depth interviews, and focus groups, allow researchers to dive into these questions by examining participants’ beliefs, attitudes, experiences, and perspectives. Data gleaned from these methods can help researchers gain insight into potential mechanisms or barriers, generate new hypotheses and questions, and understand the stories behind the quantitative results.

For decades, social science scholars within anthropology, sociology, and psychology have employed qualitative methods. In recent years, many researchers within the traditionally quantitative field of economics have also incorporated qualitative methods into their studies and built teams with qualitative expertise to strengthen their research.

From our conversations with several researchers who've conducted and relied on qualitative research methods as part of J-PAL-supported randomized evaluations, we've summarized a few practical tips for those interested in integrating a qualitative approach into their studies:

- While developing your randomized evaluation, don't discount questions that can be best addressed through qualitative methods. These questions may challenge certain assumptions or shed light on mechanisms, contexts, or outcomes that quantitative methods may not fully capture. For example, researchers may want to gain insight into the experience of staff implementing a particular program to identify the challenges and barriers they faced, understand their perception of the program’s successes or shortcomings, and identify potential obstacles to longer-term implementation or scale-up. While this may be difficult to assess in a survey, focus groups and qualitative interviews could provide valuable insights.

- Account for qualitative research in study proposals and budgets. Qualitative research can require a high time commitment and can benefit from the support of specialized team members.

- Cultivate relationships with implementing partners. Forming a strong relationship with implementing partners is one key component of a successful and policy-relevant study and can help build a foundation for conducting qualitative research. Implementing organizations interact closely with study participants and often play instrumental roles in shaping the design and implementation of randomized evaluations. They are also well-placed to help researchers determine the best approaches to carrying out the qualitative parts of a study.

- Diversify your research team. Consider building a research team of individuals from different disciplines. Scholars of psychology, anthropology, sociology, and social work often have extensive experience with qualitative methods and bring valued perspectives that economists may be missing.

This blog series highlights three examples of J-PAL research teams using qualitative research methods to inform and strengthen the design, implementation, and analysis of their randomized evaluations. For part two of the series, we interviewed Professor of Public Policy and US Health Care Delivery Initiative Co-Chair Dr. Marcella Alsan about how qualitative research helped motivate and shape the central question and hypothesis for a study on racial concordance between physicians and patients. In part three, we spoke with Professor of Sociology & Social Policy Stefanie DeLuca about how the Creating Moves to Opportunity randomized evaluation, a study she co-led, embedded qualitative research methods into its study design. Part four features a conversation with Associate Professor of Social Work and Oregon Health Insurance Experiment co-author Heidi Allen on how qualitative research helped the research team make sense of some of the study’s results. The series concludes with part five, where we spoke with researchers from the Baby's First Years study about the value of qualitative research in providing a deeper understanding of mothers' experiences.

J-PAL affiliates Marcella Alsan and Amy Finkelstein highlight four key benefits of randomized evaluations that are useful for addressing pressing health policy questions, drawing from their recent Milbank Quarterly article.

The medical community has long valued medical randomized controlled trials (RCTs) for their ability to tell us whether medicines cause any improvement (or worsening) of health and safety. Take the Covid-19 vaccines: manufacturers have used RCTs to determine the safety and efficacy of their vaccines.

RCTs are also well known for being able to determine the effect of a health care program or policy, but they are not a one-trick pony. As we (Alsan and Finkelstein) highlight in our new Milbank Quarterly article, RCTs have (at least) four additional benefits that are useful for addressing pressing health policy questions.

RCTs empower us to answer the questions we want to answer

RCTs allow researchers, practitioners, and policymakers to study the questions that they want to study, rather than having to limit themselves to what they can study based on naturally occurring variation that already exists.

One example is the 2017 Oakland study on diversity in the physician workforce. Researchers were interested in the effect of racial concordance between patient and physician on demand for health services among Black men. Identifying this effect with observational data would be difficult because most individuals choose their primary care doctor, and many of the most vulnerable individuals do not have a primary care doctor at all. Additionally, a lack of diversity in the physician workforce makes it hard for patients to find doctors who look like them.

Researchers overcame these challenges by randomizing Black male patients to receive either a Black or non-Black doctor and studied how such assignment affected demand for preventative care. The study found that Black male patients who were randomly assigned to a Black provider were eighteen percentage points more likely to undertake preventative care.

RCTs can test theory

A critique of many studies, including some RCTs, is that they don’t illuminate “why” a program or policy had or didn’t have an impact. In some cases, RCTs can be designed to test different theories and pinpoint reasons why a program or policy was effective or not.

One example of researchers designing an RCT in this manner is a 2010 study on the impact of immunization camps and incentives. A nonprofit, Seva Mandir, aimed to increase child immunization rates in rural Udaipur, India. The researchers designed the evaluation to determine why immunization rates were so low. One potential barrier was that clinics that offered vaccines were often closed. Another possibility was that it was challenging for families to go to the clinics for the five courses of immunizations and they were not being incentivized enough to go.

The researchers investigated this problem by randomly assigning the community into three groups: a comparison group, a treatment group with an intervention aimed at combating the closed clinics issue, and a second treatment group that also received incentives. Because of this study design, researchers were able to determine that both closed clinics and insufficient incentives were significant barriers to child immunization.
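A three-arm design like the one described above can be sketched in a few lines of Python. This is only an illustrative sketch, not the procedure the researchers used: the community identifiers, the arm labels, the `assign_arms` helper, and the fixed seed are all assumptions introduced for the example.

```python
import random
from collections import Counter

# Hypothetical arm labels paraphrasing the three groups described in the text.
ARMS = ["comparison", "clinic_access", "clinic_access_plus_incentives"]


def assign_arms(units, arms, seed=0):
    """Randomly assign each unit to one arm, keeping arm sizes as equal as possible."""
    rng = random.Random(seed)  # fixed seed so the assignment can be reproduced
    shuffled = list(units)
    rng.shuffle(shuffled)
    # Deal the shuffled units into the arms round-robin.
    return {unit: arms[i % len(arms)] for i, unit in enumerate(shuffled)}


# Hypothetical units; a real study would use its own list of communities.
communities = [f"community_{i:02d}" for i in range(1, 13)]
assignment = assign_arms(communities, ARMS, seed=42)
arm_sizes = Counter(assignment.values())
print(arm_sizes)
```

Because chance alone decides which arm a unit lands in, differences in outcomes across arms can be attributed to the interventions. Comparing the first treatment arm with the comparison group isolates the effect of addressing closed clinics, and comparing the two treatment arms isolates the added effect of incentives.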

RCTs can credibly examine indirect effects

Sometimes a program may be intended to serve one group of people, but it may have positive or negative indirect, or “spillover,” effects on other groups. Large-scale RCTs can be used to credibly measure these spillovers.

Two recent, nationwide RCTs involving the Centers for Medicare & Medicaid Services revealed that there are substantial impacts of Medicare policy on the treatment of non-Medicare patients. In one, a different way of reimbursing for hip and knee replacements for Medicare patients was randomly introduced in some metropolitan areas and not in others. Yet both Medicare patients and privately insured patients were impacted similarly. These results suggest that one insurer’s reforms can have broader effects on the treatment of patients as a whole.

RCTs can facilitate fruitful collaboration

RCTs often require researchers to connect with providers to design and implement a study, which strengthens research designs. The collaboration between researchers and the organizations that implement the programs or policies being evaluated is invaluable. Implementing organizations can provide advice about study design and study interpretation.

This proved to be true for the Oakland physician-patient racial concordance study discussed earlier. I (Alsan) and my co-authors collaborated with implementing organizations to run focus groups with Black men that indicated that advertising the study as including injections, such as flu vaccines, could deter patients. This information led to modifications to the study advertisements, which ultimately improved recruitment and the study overall.

Interested in learning more about RCTs?

The additional benefits of RCTs beyond causality are numerous and can help us learn how to improve health policy. Read more about these benefits demonstrated by recent RCTs in the paper, Beyond Causality: Additional Benefits of Randomized Controlled Trials for Improving Health Care Delivery.

For members of the health policy and medical communities interested in lessons from randomized evaluations in health care delivery, recordings of our recent virtual convening, “HCDI at 8: Building on Eight Years of Randomized Evaluations to Improve Health Care Delivery” are now available.

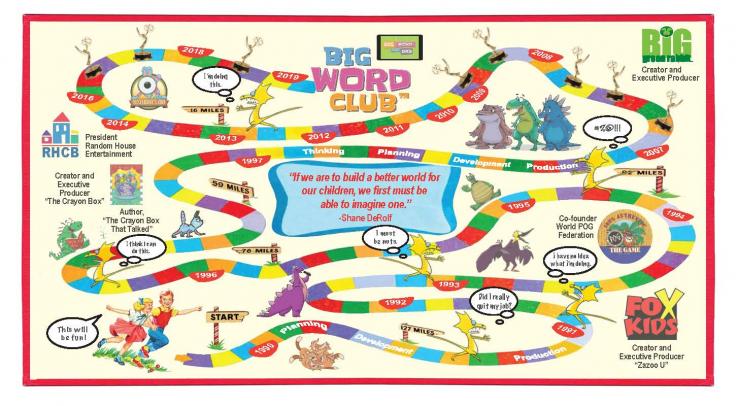

My name is Shane DeRolf.

I am the founder and CEO of Big Word Club, a digital learning company committed to improving the vocabularies of every kid in America.

It all started about 25 years ago when I quit my job to pursue a dream.

That dream was to create great media that helped kids think, learn and grow.

Here’s what those 25 years felt like…

Along the way, I’ve learned a lot.

About myself.

About life.

About business.

And though the dream has not changed, its focus has evolved.

It’s evolved from creating great media for kids to creating great media for kids that addresses and solves a large, unmet problem.

Closing the Word Gap

According to a landmark 1995 study by Hart and Risley, children’s vocabulary skills are linked to their economic backgrounds. The study found that children from lower socioeconomic homes may hear as many as thirty million fewer words than children from higher socioeconomic homes by the time they are three years old. This is commonly called the “word gap.” Because kids learn by hearing, they don’t learn what they don’t hear. This translates into a gap of 400-700 words between the vocabularies of low-income kids and those of their more privileged classmates.

Because kids who start behind tend to stay behind, we decided to try and create a vocabulary intervention that…

• Kids loved.

• Was fast, fun, and easy to implement by both teacher and parent.

• Was easily scalable and inexpensive.

• Improved the vocabularies of preschool and kindergarten students.

And that’s exactly what we did with Big Word Club. (Watch a short video.)

Problem was, nobody believed us.

Turns out anecdotal evidence doesn’t hold much water in the education market. Despite the fact that we successfully launched a pilot in 1,000+ preschool and kindergarten classrooms to rave reviews and converted 20 percent of our pilot teachers into paid subscribers, the truth was we lacked the scientific evidence we needed to give school districts the confidence to buy Big Word Club for their students.

The Moment of Truth

We realized that if Big Word Club was going to be successful and help the millions of kids we envision helping, we needed to get the evidence that proved it works.

As luck, life, or serendipity would have it, it was about this time that I was introduced to J-PAL and made my pitch to Vincent Quan, a Senior Policy Manager at J-PAL North America. It went something like this:

“Every year, millions of kids from lower socioeconomic homes are entering preschool and kindergarten knowing hundreds fewer words than their more privileged classmates. Kids who start behind tend to stay behind and these kids never reach their potential. We believe Big Word Club can help and we’d like your help to prove it.”

Fortunately, J-PAL agreed that the promise of Big Word Club was worth the cost of funding a randomized evaluation and assembled a world-class team from the University of Chicago and University of Toronto to implement the evaluation.

The Scary Part

Though we could not have been more excited to be part of a randomized evaluation funded by a leading research center at MIT and implemented by rock stars of academia, we also knew that if the results were not good, we would close the company and many years of hard work and millions of investment dollars would be lost.

So it was a scary time.

It was also the smartest thing we ever did as a company.

Over the last eighteen months, the Big Word Club program has been the focus of a randomized evaluation to measure its impact on preschool and kindergarten students' vocabularies. The evaluation was implemented by Professors Ariel Kalil and Susan E. Mayer, along with the invaluable assistance of project manager Michelle Michelini of BIP Lab at the University of Chicago and Professor Phil Oreopoulos of the University of Toronto. The research team also helped us design a customized vocabulary assessment based on the Peabody Picture Vocabulary Test-IV.

As the founder and CEO of a company undergoing a randomized controlled trial, those eighteen months were some of the most difficult of my career—not because of what we had to do but because of what we could not do.

As a company, we felt that because we were committed to a randomized evaluation, we needed to wait until the results came in before we could, in good conscience, aggressively sell Big Word Club in the market or fundraise.

Fortunately, when the results came in, it was good news and full of exciting observations. Below are some of the highlights:

• In percentile terms, results show that a child at the 50th percentile would gain up to 13 percentile points on average, jumping to the 63rd percentile after 17 weeks of access to Big Word Club.

• A child at the 80th percentile would gain up to 7 percentile points on average, jumping to the 87th percentile after 17 weeks of access to Big Word Club.

• Unlike other vocabulary interventions that are expensive and require significant teacher training, Big Word Club is low-cost and requires no teacher training.
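The gains in the first two highlights are differences between percentile ranks, so they are most naturally read as percentile points rather than percent changes. The short Python sketch below makes the distinction explicit using only the percentiles reported above; the function names are hypothetical and introduced just for illustration.

```python
def percentile_point_gain(start, end):
    """Difference between two percentile ranks, in percentile points."""
    return end - start


def relative_gain_percent(start, end):
    """Change in percentile rank as a percent of the starting rank."""
    return 100 * (end - start) / start


# Percentile ranks reported in the highlights above.
for start, end in [(50, 63), (80, 87)]:
    points = percentile_point_gain(start, end)
    relative = relative_gain_percent(start, end)
    print(f"percentile {start} -> {end}: {points} points, {relative:.2f}% relative change")
```

A move from the 50th to the 63rd percentile is a 13-point gain, which is a different quantity from a 13 percent increase in a child's percentile rank.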

What We Learned

• We learned what we can do to improve the effectiveness of our program when developing future content (this was invaluable and unexpected).

• We learned how to work better with incredibly smart people who think differently than we do.

• We learned how to be more patient.

What’s Next for Big Word Club

Now that we are truly an evidence-based intervention, we can market Big Word Club with confidence to school districts, elementary schools, daycare centers, Head Start programs, teachers and parents. And that’s exactly what we plan to do!

We are also exploring strategic partnerships and investment opportunities with individuals, companies, and organizations that can help Big Word Club do more good faster.

By improving a child’s vocabulary, we improve his or her chances for a successful and happy life and that, to us, is a job worth doing.

Scaling up programs that have strong evidence of effectiveness is a vital but often complicated step along the evidence-to-policy journey, and J-PAL has learned a lot about collaborating with policymakers to translate research into large-scale action. In this blog post, Iqbal Dhaliwal (Global Executive Director), Samantha Friedlander (Senior Communications, Policy, and Research Associate), and Claire Walsh (Associate Director of Policy) draw on more than a decade of experience worldwide to share four key lessons for forging successful scaling collaborations.

Scaling up programs that have strong evidence of effectiveness is a vital but often complicated step along the evidence-to-policy journey. Ultimately, scaling is both a science and an art, and after a decade of successes and setbacks in partnerships for scale, J-PAL has learned a lot about collaborating with policymakers to translate research into large-scale action.

J-PAL has devoted considerable resources over the past two decades to the science part of the scaling equation—conducting replication studies, testing multiple versions of programs, and designing randomized evaluations to identify key mechanisms driving impact in order to understand when findings generalize (or don’t) from one context to another.

In a book chapter recently published in The Scale-Up Effect in Early Childhood and Public Policy: Why Interventions Lose Impact at Scale and What We Can Do About It (Routledge 2021), we dive into the ways in which scaling is also an art—one that takes a coalition of committed partners with diverse expertise and political savvy working together to make change happen. (The full volume, which explores both threats and enabling factors for scalability in the early childhood field, was edited by John A. List, Dana Suskind, and Lauren H. Supplee.)

Our chapter, “Forging Collaborations for Scale: Catalyzing Partnerships Among Policy Makers, Practitioners, Researchers, Funders, and Evidence-to-Policy Organizations,” recognizes that despite growing interest in evidence-informed policy making, we still have a long way to go to fully understand how to scale up effective programs. Successfully scaling up an evidence-informed program or intervention requires (at minimum):

- a deep understanding of both global evidence and the specific local context and systems,

- a policy window in which change is possible,

- political will to change the status quo,

- adequate funding resources, and

- capacity to monitor and implement the program well.

Because no single organization’s mandate covers all of these conditions, collaborations between policy makers, researchers, practitioners, funders, and evidence-to-policy organizations can make it more likely that these scale-up conditions are met.

We draw on more than a decade of J-PAL’s experience worldwide to share four key lessons for forging successful scaling collaborations.

- Invest in long-term partnerships and develop the resources to respond to policy windows in real time. Nearly all of the scale-ups to which J-PAL has contributed happened in the context of a multi-year partnership that included a mix of collaborative research, capacity building, and policy work. Although it may seem counterintuitive, investing in these long-term partnerships is the key to quick response times when new evidence-to-policy windows arise, as you can only learn about these opportunities in the first place if you have established trust and lines of communication. This is the art part—and requires a deep understanding of the strengths, incentives, and constraints of each collaborator. In our chapter, we discuss challenges to long-term thinking and how to overcome them.

- Use several complementary types of data and evidence to inform every scaling effort. Here, we touch on the science side of the equation, and discuss data sources including rigorous evidence of effectiveness, descriptive research, continuous feedback from participants and front-line implementers, administrative data, and a globally informed but locally grounded approach to evidence generation and use. Evidence from randomized controlled trials is crucial, but many other types of data are also needed to successfully scale a program. In our chapter, we discuss not only what data is useful but also when this data should be used (that is to say, continuously rather than just at the beginning or the end of a scale-up) and where it should come from (ideally, both global and local sources).

- Help institutionalize a broader culture of evidence-informed policymaking that goes beyond individual programs. Here, we especially drew on lessons learned from our government partnerships in Latin America. This type of data-driven culture can be fostered through innovation funds that incentivize evidence generation and use, embedded labs that create space for evidence to be used as a tool for learning, or policies that require the use of evidence as a step in the policymaking process. In our chapter, we discuss steps to support champions to build an institutional culture of data and evidence use.

- Leverage evidence-to-policy (E2P) organizations that can play a critical role in bringing different stakeholders together to make change happen. For example, more than 400 million people have been reached by programs that were scaled up after being evaluated by J-PAL affiliated researchers. Although collaborations can of course happen without a convener, E2P organizations can play a vital role in innovation, generating rigorous evidence, relationship-building, and institutionalizing systems for evidence to scale. In our chapter, we discuss factors that must hold for E2P organizations to be effective as well as the services that they can provide in return.

The partnerships from which we drew these lessons span years and continents, but they all demonstrate the importance of careful and intentional collaboration. We hope the lessons in our chapter can provide guidance to a variety of actors hoping to build collaborations with an eye toward scale. And we are honored to be part of a volume that includes so many thoughtful perspectives on how to best use evidence to scale programs effectively and reach as many lives as possible.