The next decade of RCT research: What can we learn from recent innovations in methods?

From Teaching at the Right Level to the multifaceted Graduation approach, J-PAL’s affiliated researcher network has helped to evaluate a diverse range of innovative interventions aimed at reducing poverty over the past twenty years. But the innovations aren’t limited to the interventions themselves. Researchers worldwide have also made important advancements in the methodology of randomized controlled trials (RCTs) to generate better quality evidence. At J-PAL’s 20th anniversary celebration at MIT in November 2023, we held a panel discussion on RCT innovations with researchers who are utilizing new data sources, analytical methods, and study designs to move RCT research forward. In this blog, we share insights from this session and reflect on the future of innovation in RCT methods.

20 years of innovation

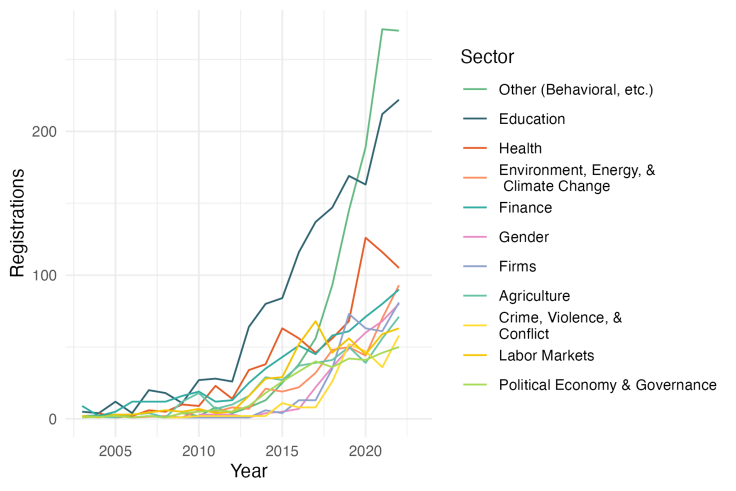

The rise in the number and geographic reach of RCTs in the social sciences over the past two decades is striking: the number of studies has expanded ten-fold to over 1,000 annually, across 167 countries, in 2023 (as proxied by registrations in the AEA RCT Registry). This accumulation of evidence has promoted further learning and innovation across sectors by allowing researchers to continually build on existing studies and develop new techniques to create more robust evidence. During this period, we have seen not only dramatic increases in evidence generation, but also important advances in the way that we conduct RCTs to address new and existing challenges. Notable innovations cover a range of research areas, from access to new data sources to new approaches to experimental design and data analysis.

Four innovations moving research forward

Together with session moderator William Parienté, professor at UCLouvain and Chair of J-PAL’s Research vertical, we invited four panelists to present on innovations that may shape how RCTs are run in the next decade. Craig McIntosh discussed cash benchmarking for cost-benefit comparisons across studies; Seema Jayachandran presented her work on validating survey measures of abstract constructs; Poppy Widyasari advised on using administrative data in experiments; and Dean Karlan highlighted megastudies and multi-site studies as ways to address incentive issues in large coordinated studies.

Cash benchmarking

Craig McIntosh, professor at the University of California, San Diego and co-chair of J-PAL’s Agriculture sector, emphasized a need to better inform policy-making by shifting from benefits-focused evaluation to include a more comprehensive consideration of costs. Much of the work on cost-effectiveness focuses on collecting cost data during or after implementation and comparing these figures across programs. There are, however, challenges in comparing diverse studies, where samples, outcomes, and scales can vastly differ. As a solution, Craig and his team sought to make more direct cost comparisons by designing cash transfer equivalents for in-kind child nutrition and workforce readiness programs to study how well cash transfers would perform against their non-cash counterparts. Because cash transfers have a clear cost and proven impact on multiple outcomes, they can provide an easily transferable comparison point for the cost-effectiveness of alternative interventions.
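
To make the benchmarking logic concrete, here is a minimal sketch in Python. Every number and name in it is hypothetical and is not drawn from Craig's studies. It assumes both arms are measured on the same outcome against the same control group, and it simply compares impact per dollar spent.

```python
from dataclasses import dataclass


@dataclass
class Arm:
    """One treatment arm measured against the same control group."""
    name: str
    cost_per_household: float  # total delivery cost in USD (hypothetical)
    effect: float              # estimated impact on a shared outcome (hypothetical)


def impact_per_100_usd(arm: Arm) -> float:
    """Estimated impact bought by each 100 USD spent on the arm."""
    return 100 * arm.effect / arm.cost_per_household


# Illustrative numbers only: an in-kind program and a cash arm sized to cost the same.
program = Arm("in-kind nutrition program", cost_per_household=140.0, effect=0.07)
cash = Arm("cost-equivalent cash transfer", cost_per_household=140.0, effect=0.05)

for arm in (program, cash):
    print(f"{arm.name}: {impact_per_100_usd(arm):.3f} per 100 USD")

# With equal costs, the comparison reduces to comparing the two effects directly.
program_beats_cash = impact_per_100_usd(program) > impact_per_100_usd(cash)
```

Sizing the cash arm to match the program's cost is what makes the comparison direct: neither arm's effect has to be rescaled before the two are set side by side.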

Looking toward the future, Craig proposed that eventually it would be possible to do a careful meta-analysis of cash transfer evidence to create a simulation tool allowing researchers to predict a distribution of cash impacts across a set of outcomes. This would reduce the cost of future research by simulating the impacts of an equivalent cash transfer treatment arm, and could be continually updated as new evidence comes in. There is still much to learn about the cost-effectiveness of cash transfers, with Craig using findings in his own studies to remark on potential difficulties, including cases where effectiveness may not increase at the same rate as cash distributed.

Validating short survey modules

Seema Jayachandran, professor at Princeton University and co-chair of J-PAL’s Gender sector, presented her work on developing a systematic way to identify survey measures most predictive of more abstract constructs like women’s empowerment. To measure these types of constructs, researchers typically design an index containing several close-ended questions on the topic. The issue is in knowing what questions to include in these modules. To address this, Seema and an interdisciplinary research team developed a two-stage process. First, they created a benchmark measure of women’s empowerment using qualitative data from in-depth interviews. They then used machine learning algorithms to identify items from widely used questionnaires that best predicted women’s empowerment when compared to their benchmark.
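
The second stage can be illustrated with a small sketch. This is not the research team's algorithm: it swaps in a simple greedy forward selection, and the item names and data are made up. It assumes each respondent has a numeric benchmark score (for example, coded from the in-depth interviews) and numeric answers to each candidate survey item.

```python
from statistics import mean


def pearson(x, y):
    """Pearson correlation between two equal-length lists of numbers."""
    mx, my = mean(x), mean(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    var_x = sum((a - mx) ** 2 for a in x)
    var_y = sum((b - my) ** 2 for b in y)
    if var_x == 0 or var_y == 0:
        return 0.0
    return cov / (var_x * var_y) ** 0.5


def select_items(responses, benchmark, k):
    """Greedily pick k items whose summed score best tracks the benchmark.

    responses: dict mapping item name -> list of numeric answers (one per respondent)
    benchmark: list of benchmark scores, one per respondent
    """
    chosen = []
    index = [0.0] * len(benchmark)
    for _ in range(k):
        best_item, best_corr = None, float("-inf")
        for item, answers in responses.items():
            if item in chosen:
                continue
            # Score the index that would result from adding this item.
            candidate = [i + a for i, a in zip(index, answers)]
            corr = pearson(candidate, benchmark)
            if corr > best_corr:
                best_item, best_corr = item, corr
        chosen.append(best_item)
        index = [i + a for i, a in zip(index, responses[best_item])]
    return chosen


# Toy data for six respondents; item names are hypothetical.
benchmark = [0, 1, 1, 2, 3, 3]
responses = {
    "decides_own_purchases": [0, 0, 1, 1, 1, 1],
    "can_visit_friends": [0, 1, 1, 1, 1, 1],
    "owns_mobile_phone": [1, 0, 1, 0, 1, 0],
}
print(select_items(responses, benchmark, k=2))
```

In practice one would evaluate the selected items on held-out respondents, since choosing and scoring items on the same sample overstates how well a short module predicts the benchmark.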

The index created from this study, while still context-specific, showed that it is possible to create short survey modules that provide valid measures of complex constructs. This model has a number of potential benefits, including reducing the cost of data collection and allowing easier harmonization of data across studies. Going forward, applying this model in different contexts to identify universally established indices for complex constructs could significantly enhance measurement consistency across different studies and sectors.

Leveraging administrative data

The growing accessibility of administrative data in recent years presents a promising avenue to facilitate intervention delivery, monitor program implementation, and measure outcomes at increasingly large scales. Poppy Widyasari, Associate Director of Research at J-PAL Southeast Asia (SEA), demonstrated how J-PAL SEA has used administrative data to mitigate measurement error and improve cost efficiency at different stages of the research process. Recognizing that successful partnerships often require initial investments in infrastructure, Poppy provided insights for navigating the complexities of obtaining and integrating administrative data into research projects. This includes creating a plan for accessing data, preparing for data lags, and building partnerships with data providers to work toward shared goals.

Administrative data, such as tax filings or school records, are often collected by governments and other agencies at broad scales. Planning ahead to take advantage of these sources is key to successfully leveraging administrative data in randomized evaluations. Poppy also underscored the importance of relationship building, highlighting establishing trust with government partners as a critical first step. The use of administrative data allowed J-PAL SEA to pursue studies on a national scale in Indonesia, something that would be extremely costly and time consuming to do through traditional survey methods. In these studies, the research teams were able to use census data to identify eligible beneficiaries, banking data to monitor program implementation, and social security transaction data as an intervention outcome.

Megastudies and multi-site studies

Dean Karlan, professor at Northwestern University, proposed two solutions to improve the replication, validation, and synthesis of lessons learned across experiments: megastudies—in which many interventions are implemented at once targeting the same outcome in the same population—and multi-site studies—in which the same intervention is implemented across different populations. Currently, most evidence synthesis is done through meta-analyses, but this can lack the oversight of the research process needed to make clear comparisons between studies. Megastudies and multi-site studies coordinate data collection efforts and costs to build out additional research, taking advantage of large sample sizes across multiple locations to enhance validity and reduce variation across experiments.
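
The difference between the two designs shows up in how units are randomized. The sketch below is illustrative only: the arm names, site names, and sample sizes are invented, and real studies would typically add stratification and power calculations.

```python
import random
from collections import Counter


def assign(units, arms, seed=0):
    """Shuffle units, then deal them to arms in turn so arm sizes stay balanced."""
    rng = random.Random(seed)
    shuffled = list(units)
    rng.shuffle(shuffled)
    return {unit: arms[i % len(arms)] for i, unit in enumerate(shuffled)}


# Megastudy: one population, many interventions aimed at the same outcome.
population = [f"person_{i}" for i in range(12)]
megastudy = assign(population, ["control", "reminder", "incentive", "peer_message"])

# Multi-site study: the same intervention, randomized separately within each site.
sites = {
    "site_a": [f"a_{i}" for i in range(6)],
    "site_b": [f"b_{i}" for i in range(6)],
}
multi_site = {
    site: assign(units, ["control", "treatment"], seed=k)
    for k, (site, units) in enumerate(sites.items())
}

print(Counter(megastudy.values()))
print({site: Counter(a.values()) for site, a in multi_site.items()})
```

Randomizing within each site keeps every site's treatment and control groups comparable, so effects can be estimated site by site and then pooled.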

Although megastudies and multi-site studies may seem prohibitively costly, Dean and the panel noted that there are opportunities to innovate for more efficient studies. One example is leveraging administrative data from platforms like social media and mobile banking, where the fixed costs of obtaining data can be covered by a single party, and repeated studies by other researchers can build off of these data structures. Another is partnering with large NGOs and international organizations that operate across countries, where additional interventions can be overlaid on existing programs across multiple sites, easing the cost of one extra site and offering tremendous opportunity for scaling research coordination.

The future of RCT research

While attempting to cover the full range of innovations over the past twenty years would require an encyclopedia-length blog post, it’s worth acknowledging some of the notable innovations that we weren’t able to cover in our panel. The adoption of machine learning techniques has allowed researchers to take advantage of larger and more complex datasets as well as improve our understanding of treatment effect heterogeneity and causal inference. Experiments may also now test large sets of interventions at once or contain an adaptive component for more tailored interventions. Making use of the large stores of existing evidence, there are also innovations that aim to aggregate lessons across studies and enhance research transparency and reproducibility.

These developments, together with the insights from the panel, underscore that RCT methods continue to evolve and propel the methodology forward. These novel approaches not only showcase progress made to date but also point toward collaborative and systematic approaches that will shape the future of RCT research. As we navigate challenges and embrace changes to the methodology, these innovations present promising solutions to enhance the generation, synthesis, and policy impact of evidence. With ongoing shifts in the landscape of RCT research that may have seemed like a moonshot twenty years ago, such as ways to measure long-term and large-scale impacts, explore more ethical and accurate data collection, and introduce new data sources, we look forward to the future of this work to push the frontiers of research and learning.

On November 3, we hosted an event at MIT in Cambridge, MA, that brought together a diverse group of partners to reflect on two decades of progress and look to the future. The event showcased perspectives of people engaging directly with evidence-informed policies, featuring program participants and implementing partners sharing their stories and their reflections on J-PAL at 20.

We opened our doors in 2003 with a mission to reduce poverty by ensuring that policy is informed by scientific evidence. In our first decade, we set out to build a global infrastructure for rigorous research in new contexts. In our second decade, we worked with hundreds of partners to transform how evidence is applied to policy change and strengthen researcher and implementer capacity—all with the goal of improving lives around the world.

At the event, government and NGO partners, funders, and researchers in the J-PAL network joined our leadership, staff, and alumni for a series of short panel sessions and talks, followed by small-group discussions on pressing issues in international development and social policy, and concluding with a fireside chat with J-PAL directors. The sessions provided platforms for listening, connecting, and charting a path forward for the next decade.

Learning from global voices

The morning agenda featured sessions led by evaluation participants, activists, and global thinkers who shared perspectives and transformational stories from their journeys of social impact.

Links to video recordings of these sessions are coming soon—stay tuned.

The journey to J-PAL at 20

Hassan Jameel, vice chairman of Community Jameel, kicked off the day with remarks on the ambitious vision shared by Mohammed Jameel, Abhijit Banerjee, and Esther Duflo.

“Sometimes when we look back and see the Nobel Prize, seven offices around the world, hundreds of staff and affiliated professors, 600 million lives touched, it can be tempting to think that this was all going to turn out this way,” Hassan said.

“But the courage and the vision of that small group of people and a lot of hard work by J-PAL's staff, partners, and researchers are truly the bedrock of these towering achievements.”

J-PAL Director Esther Duflo (MIT) followed Hassan with brief remarks setting the stage for the day.

“In a typical birthday celebration, we would reflect on the past and say how wonderful we are,” Esther said. “And I think we are pretty wonderful and awesome, but I also think we kind of know that, and maybe it's not necessary to spend another few hours to repeat it. So what I really wanted to do this morning is to step back.

“There are not going to be many researchers on stage today except in moderating capacity. We keep talking about the movement. The movement is made of people who experienced poverty, of people who work directly with people who experience poverty, and I want to spend the morning hearing from them. … My hope is that it is going to give us, collectively in this room, both the energy and the charge for what we need to do in the next one month, one year, ten years, twenty years, and so on.”

High-quality education for all

In this session, speakers reflected on their frontline experiences leading transformative education initiatives. Pratham’s Faiyaz Ahmed, Rukmini Banerji, and Nuzhat Malik discussed unexpected challenges and successes implementing Teaching at the Right Level in India, and J-PAL affiliated professor and Health sector Co-Chair Pascaline Dupas (Stanford) moderated a conversation with Gifty Amaney and Stellina Pana, both field consultants at Innovations for Poverty Action, on expanding access to girls’ secondary education in Ghana.

The session wrapped up with a video talk from Deborah Danso, a pharmacy technician in Ghana, on the role that education played in her life as the recipient of a scholarship program evaluated by Pascaline and her colleagues.

Reframing the narrative

Photographer and filmmaker Ami Vitale spoke about her journey from being a war photographer covering conflicts around the world to documenting wildlife and environmental stories in support of environmental conservation.

“I realized these are the stories that really give us the sense that those caught in the conflicts don't stop dreaming and hoping for a better future. It's often in the face of our greatest challenges that humanity, ingenuity and creativity often can light a path forward… what happens next is in all of our hands,” Ami said.

“When I look out at all of you, I realize it is an impressive group of people who are used to pushing the envelope. And if the people in these stories do so much with just resilience and imagination and ingenuity, what are we all going to accomplish together?”

Poverty, the environment, and climate change

J-PAL affiliated professor and Environment, Energy, and Climate Change sector Co-Chair Kelsey Jack (UC Santa Barbara) introduced this session, which featured conversations on responding to the adversities created by climate change with Ami Vitale; James Mwenda, a Kenyan conservationist and entrepreneur; and Gregory Chen, managing director of BRAC’s Ultra-Poor Graduation Initiative.

Hindou Oumarou Ibrahim, president of the Association for Indigenous Women and Peoples of Chad, spoke via video on the impact of climate change on people experiencing poverty in central Africa, emphasizing the importance of global support for locally-grounded solutions.

My epic failures

J-PAL Director Ben Olken (MIT), co-chair of J-PAL's Social Protection sector, spoke about the importance of risk-taking in generating successful research that informs real-world policies and programs.

“The research process is much more iterative than you think,” Ben said. “Every project that you try doesn't work, and actually, it shouldn't work. We should be going through the process of making sure what we find actually is connected to the realities on the ground, that it's practically feasible, and that it's consistent with the policies that we're trying to do. Having that flexibility and adaptation is really important. If we aren't failing, we're probably not taking enough risks.”

Poverty amidst plenty: The good fight in the United States

Janti Soeripto, CEO of Save the Children US, moderated a conversation on fighting poverty in the United States with Maryann Broxton, main representative to the United Nations, International Movement ATD Fourth World, and Ashleigh Stocton, volunteer community lead with Save the Children Action Network (SCAN). Maryann spoke about the challenges of measuring poverty in the United States and lessons taken from her experience conducting poverty research.

Ashleigh discussed SCAN’s work in advocating to US elected officials for children to have a healthy start in life.

“Because children don't have a voice, they can't go and talk to our elected officials,” Ashleigh said. “So we have to be that voice for them.”

Transforming communities from the inside out

Marcelo Rocha, known as DJ Bola and founder of A Banca, a youth organization in São Paulo, Brazil, joined the event via video to share his work on community-led initiatives in Brazil and the importance of building dialogues between peripheries and city centers.

Working with “quacks”: The challenges of unregulated health care

Abhijit Chowdhury, professor and chair of Hepatology at the Indian Institute of Liver and Digestive Sciences, and J-PAL affiliate Jishnu Das (Georgetown University) discussed lessons from their work training informal health care providers in India.

In a video interview, Tarun Chakraborty and Tanmay Majumda, informal health care providers in West Bengal, shared their commitment to providing high-quality care and their interest in learning more up-to-date health care practices.

Afternoon breakouts: Mapping a vision for the future

In the afternoon, breakout discussions focused on specific thematic areas. J-PAL academic leads gathered with close partners to discuss emerging priorities related to corporate responsibility, intersections between agriculture and climate change research, opportunities for scaling child health interventions, fostering policy change and the scaling of promising programs, and the progress and next steps of J-PAL’s Social Protection Initiative.

In the session on agriculture and climate change, anchored by Kelsey Jack and Agriculture sector Co-Chair Tavneet Suri (MIT), participants discussed critical areas in climate policy that could benefit from more robust evidence. At the intersection of climate and agriculture, adaptation best practices for smallholder farmers in low- and middle-income countries were a key point, particularly in light of emerging international trade regulation like Carbon Border Adjustment Mechanisms and laws limiting trade from deforested land.

Two simultaneous panel sessions focused on insights related to artificial intelligence and experimental research methods.

AI: Emerging challenges and opportunities

This panel brought together thought leaders to discuss the likely impacts of evolving AI technology on social and economic issues including productivity, growth, and labor markets. There was particular focus on AI’s evolving role in education and health in high-, middle-, and low-income economies. J-PAL Global Executive Director Iqbal Dhaliwal moderated the panel, which featured J-PAL affiliate David Autor (MIT); Gita Gopinath, first deputy managing director of the IMF; Brigitte Hoyer Gosselink, director of product impact at Google.org; and Secretary S. Krishnan of India’s Ministry of Electronics and Information Technology.

Innovations in RCT methods and perspectives for the next decade

This session convened experts to discuss recent developments in RCT methods. J-PAL affiliate and Chair of J-PAL’s Research vertical, William Parienté (Université Catholique de Louvain), introduced the session and moderated discussions with Poppy Widyasari, associate director of research at J-PAL Southeast Asia; Dean Karlan (Northwestern); and J-PAL affiliates Seema Jayachandran, co-chair of J-PAL’s Gender sector (Princeton) and Craig McIntosh, co-chair of J-PAL’s Agriculture sector (University of California, San Diego).

Speakers discussed developments in the analysis and design of rigorous research, innovations in measurement and data accessibility, and advances to improve the comparability and generalizability of randomized evaluations.

The future of J-PAL and experiments in social policy

The afternoon breakouts were followed by remarks from Bengt Holmström, professor emeritus (MIT) and former chair of MIT’s Department of Economics.

Bengt said, “It is really terrific to be here because I've seen J-PAL from the beginning, and I have been excited about J-PAL from the beginning… I did forecast it would be a success, but I did not forecast it would be this dramatic and fantastic,” continuing on to commend the passion and focus of J-PAL’s founders and the organization’s ability to scale its model successfully.

Following Bengt’s remarks, Iqbal Dhaliwal, Esther Duflo, and Ben Olken reflected on our past decade of work and their vision for J-PAL going forward, and discussed emerging topics and challenges.

Discussing highlights of J-PAL’s last decade, they noted the expansion of J-PAL’s researcher network in both numbers and diversity and the vast expansion of government partnerships and policy engagements. They also noted the strength of J-PAL’s alumni network, with former staff moving on to roles in government, foundations, and civil society, expanding and elevating evidence-driven policymaking beyond J-PAL.

Esther spoke about the importance of expanding education and training to reach the next generation of researchers and policymakers.

“With demand for evidence continuing to grow, the world needs many more people with the capacity, ambition, and opportunity to make change,” Esther said.

As J-PAL looks to our next decade, we’re exploring new ways to equip people around the world with the tools to innovate, test, and scale—more often than not with no direct J-PAL involvement. The work ahead requires a truly collaborative effort to engage new audiences and partners, and work with existing partners in new ways, to breathe life into this vision, help shape it, and put it into action.

The way forward

The day wrapped up with a shared call to action by MIT Corporation Chair Mark Gorenberg, who spoke about J-PAL’s “straightforward but profound” mission and emphasized J-PAL’s leading role in MIT’s efforts to empower vulnerable communities and help society combat climate change.

We come away from this event inspired and grateful for the opportunity to connect with many people in our broad community, and eager to engage with more of you in the year ahead.

Videos of the above sessions will be posted on our YouTube channel in the coming weeks—stay tuned! In the meantime, subscribe to our eNews to learn more about emerging priorities and new directions as we enter our third decade.

The AEA RCT Registry serves as a central repository for information on planned, ongoing, completed, or withdrawn randomized trials in the social sciences. We're celebrating its tenth anniversary by using the wealth of data (updated every month in the AEA Registry Dataverse) from ten years of registrations to draw out ten new insights from the Registry.

By the age of ten Mozart famously had already composed a symphony. While by its tenth birthday last year the American Economic Association (AEA)’s Randomized Controlled Trial (RCT) Registry hadn't quite done that, it did contain over 7,400 registrations from approximately 7,700 PIs and took a major step forward in the ongoing march for greater transparency and credibility in the social sciences; we might be biased, but we think that's equally deserving of prodigy status.

The AEA RCT Registry was founded in 2013 as a collaborative project between J-PAL and the American Economic Association. The Registry serves as a central repository for information on planned, ongoing, completed, or withdrawn randomized trials in the social sciences. Though the primary goals of the Registry are to i) combat publication bias and increase transparency in the social sciences and ii) facilitate meta-analyses (see more in our trial registration research resource), a database of thousands of social science trials can be a fantastic tool for looking into broad trends in social science experimental research.

Today, we celebrate the Registry’s tenth anniversary by doing just that—using the wealth of data (updated every month in the AEA Registry Dataverse) from ten years of registrations to draw out ten new insights from the Registry.

Insights 1–5: A growing Registry

(1) The overall volume of registrations has increased dramatically, and (2) so has the proportion that are pre-registered.

Since its inception in 2013, the Registry has grown to include over 7,400 randomized experiments in over 100 countries. As of 2023, the Registry receives nearly 1,000 new registrations a year, with no sign yet of growth plateauing.

Our second insight is that an increasing proportion of those registrations are pre-registered (that is, registered before their intervention start date).

With just a couple of exceptions, this trend has been consistent since 2013, moving from about 30 percent of new registrations in 2013 to over 56 percent in 2021 and 2022, which could suggest a growing norm of pre-registration in the social sciences. Pre-registration can help improve the credibility of trials by laying out key design and analysis decisions before data is collected/seen. So the rising share of trials that are pre-registered is an encouraging trend.
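
For readers who want to reproduce this kind of calculation, here is a minimal sketch of the pre-registration share by year. The records and field names are hypothetical and do not reflect the Registry's actual data schema; the only rule taken from the text is that an entry counts as pre-registered when it was registered before its intervention start date.

```python
from collections import defaultdict
from datetime import date

# Hypothetical records; field names are assumptions, not the Registry's schema.
registrations = [
    {"registered_on": date(2013, 5, 1), "intervention_start": date(2013, 2, 1)},
    {"registered_on": date(2013, 9, 15), "intervention_start": date(2013, 10, 1)},
    {"registered_on": date(2021, 3, 10), "intervention_start": date(2021, 6, 1)},
    {"registered_on": date(2021, 7, 20), "intervention_start": date(2021, 8, 5)},
    {"registered_on": date(2021, 11, 2), "intervention_start": date(2021, 1, 15)},
]


def preregistered_share_by_year(records):
    """Share of each year's registrations filed before the intervention began."""
    totals = defaultdict(int)
    early = defaultdict(int)
    for rec in records:
        year = rec["registered_on"].year
        totals[year] += 1
        if rec["registered_on"] < rec["intervention_start"]:
            early[year] += 1
    return {year: early[year] / totals[year] for year in sorted(totals)}


shares = preregistered_share_by_year(registrations)
for year, share in shares.items():
    print(year, f"{share:.0%}")
```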

The geographic diversity of the Registry is also increasing, with continued growth in the number of unique countries with (3) trials and (4) PIs on the Registry. But (5) the vast majority of PIs are still from Europe and North America.

Next, we look into the geographic distribution of both trials (Figure 2) and institutions of principal investigators (PIs) (Figures 3 and 4) over time on the Registry. Both initially show a striking increase in diversity.

In 2013, registered trials represented 21 distinct countries and PIs from 19 different countries. This nearly doubled the next year to 37 and 34 countries, respectively, and the growth has continued: in 2023, registered trials represented 94 distinct countries with researchers from 90 countries.

Figure 3: Location of PI institutions over time

Zooming into the count of PIs, however, tells a more muted story. From Figure 4 we can see that while the number of PIs from regions other than North America and Europe has increased over time, the vast majority of PIs on the Registry continue to be based at organizations in those two regions.

Insights 6–8: An increase in “behavioral” experiments

(6) Registrations marked as “behavioral” have increased substantially over the past few years; this growth is (7) shared across all topic areas, and (8) not driven by a large increase in lab or survey experiments.

Our next insights focus on the sectoral range of trials on the Registry over time. Figure 5 shows the top-level keyword for trials registered in the AEA RCT Registry from 2013 to 2022. The most prominent feature of the graph is the dramatic rise in the number of trials tagged as focusing on “behavior,” especially from 2019 to 2022.

We explore this further in two ways. First, we check whether this has been driven by a disproportionate increase in behavioral trials in one or two sectors by plotting in Figure 6 the coincidence of other sectoral tags with “behavior” over time. While there are some smaller trends, such as a marked increase in trials marked as both “behavioral” and “gender” in the latter half of the decade, what is most striking is that behavioral trials seem to be increasing across the board, from agriculture to health to education.

We next look to see if the trend is driven by the type of randomized experiment being conducted. While many of the early trials registered on the Registry were field experiments, the Registry also includes randomized lab and survey experiments. Figure 7 shows the proportion of trials that contain text in one of their fields that signals they contain either a lab (or lab-in-the-field) experiment or survey experiment. While we see growing proportions of both over time, they are still a small percentage of the total trials registered.
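
A text search of this kind can be sketched as follows. The search patterns and the sample entries are assumptions made for illustration, since the post does not say which terms were actually used to flag lab or survey experiments.

```python
import re

# Assumed patterns; the terms actually searched for are not stated in the post.
LAB = re.compile(r"\blab(oratory)?\b|lab-in-the-field", re.IGNORECASE)
SURVEY = re.compile(r"\bsurvey experiment", re.IGNORECASE)


def classify(trial):
    """Return the set of experiment types signalled by any text field of a trial."""
    text = " ".join(str(value) for value in trial.values())
    kinds = set()
    if LAB.search(text):
        kinds.add("lab")
    if SURVEY.search(text):
        kinds.add("survey")
    return kinds


# Made-up entries standing in for Registry records.
trials = [
    {"title": "Savings nudges", "abstract": "A lab-in-the-field experiment with farmers."},
    {"title": "Attitudes to tax", "abstract": "An online survey experiment."},
    {"title": "School meals", "abstract": "A village-level field experiment."},
]

flagged = [classify(t) for t in trials]
share_lab = sum("lab" in kinds for kinds in flagged) / len(trials)
share_survey = sum("survey" in kinds for kinds in flagged) / len(trials)
print(flagged, share_lab, share_survey)
```

Keyword matching like this is only a rough signal: it misses trials that describe their design in other words and can flag trials that merely mention a lab or a survey experiment in passing.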

Insights 9–10: Papers on, about, and using the Registry

(9) The ability to link papers to registrations is underutilized, but we can glean some information from those that are.

While we can see above that registration and pre-registration are becoming larger norms in the social sciences, updating registrations with post-trial information, like the status of the trial and links to any published paper/data, is still relatively rare—as of early 2023, only around a fifth of all trials one year past their registered end date had any post-trial information filled in. However, from the entries that have updated information, we can pull some insights from the linked papers.

We were able to pull information from over 750 papers linked on the Registry. As shown in Figure 6, the majority of those 750 are working papers. From there, we assigned a broad academic field to all published papers and all working papers published through an organization (e.g., NBER Working Papers).

While the vast majority are classified as economics (86.7 percent), there are papers from a wide array of disciplines, including public health (3.4 percent), political science (2.8 percent), education (2.3 percent), psychology (2.1 percent), sociology (<1 percent), and demography (<1 percent).

(10) We aren’t the only ones using Registry data!

Finally, as the Registry has continued to grow, attract new users, and become a standard for experiments across economics, it is improving in its function as a central database of those experiments. In the last five years, we have seen a marked increase in the number of papers that use the publicly available Registry metadata as either their main analysis data or as a supplement to it. So far, these can be grouped into a few broad sets.

The first, and as expected largest, group are those that use the Registry to study research transparency questions in the social sciences, either studying the Registry as a transparency object in itself (Garret and Miguel 2018; Abrams et al. 2020; Miguel 2021), taking it as a means to glean information on other transparency behaviors (Ofosu and Posner 2018 for pre-analysis plans and Laitin et al. 2021 for results reporting), or finally using it as an auditing tool, both for self-reported data (Garret et al. 2019) and the implementation of transparency policies (Buckley et al. 2022).

Second are studies that use the Registry data as a significant portion of a larger compiled dataset attempting to proxy for the universe of experiments and experimenters in a particular subset of social science research (Corduneanu-Huci et al. 2022 on the location of researchers conducting experiments, and Corduneanu-Huci et al. 2021 on the location and political economy of experiments).

The last group of studies uses the data to answer meta-scientific questions about the studies on the Registry (Leight et al. 2022 on publication bias in RCT research; Murtagh-White et al. 2023 on the possibility of automated evidence aggregation).

Looking forward to the next ten years

While we hope our ten insights were interesting, we only scratched the surface of what’s possible with the Registry data. We look forward to seeing more uses of the Registry data in the future and, more than anything, to seeing how the Registry itself continues to evolve over the next ten years. In the meantime, please continue to register your trials, and use the Registry and its data to find exciting experiments in your area of interest!

Women’s agency, or their ability to make and act on their choices for their lives, is an important concept in research and policy related to gender equality. Many policies aim to increase women’s agency, which could be a means for them to improve their health, economic security, and decision-making power within their household and community. While there are several validated long survey measures of women’s agency, in many cases, researchers seek a short measure. In a new research project, the goal was to design a validated short measure of women’s agency through an innovative method for survey module design.

In this post, J-PAL Gender sector chair Seema Jayachandran summarizes her recent paper with Monica Biradavolu and Jan Cooper.

Introduction

Women’s agency, or their ability to make and act on their choices for their lives, is an important concept in research and policy related to gender equality. Many policies aim to increase women’s agency, which could be a means for them to improve their health, economic security, and decision-making power within their household and community. In addition, increasing women’s agency is typically viewed as an end in itself.

Unlike physical characteristics such as height, women’s agency is a psychological construct, and it cannot be directly observed. For these reasons, it is challenging to measure quantitatively.

While there are several validated long survey measures of women’s agency, in many cases, researchers seek a short measure. The goal of this project was to design a validated short measure of women’s agency, suitable for north India and perhaps applicable elsewhere, through an innovative method for survey module design. The new method combines machine learning and semi-structured interviews, and we refer to it as MASI.

Richer ways to capture women’s agency, such as semi-structured interviews or real-stakes choice experiments, are not practical for most large studies. They are time-intensive, skill-intensive, logistically complex, or expensive. While these techniques can provide in-depth, nuanced data, a shorter, simpler survey module would allow more researchers to measure women’s agency across various contexts, particularly if agency is a secondary focus of the study. In our project, we used these richer ways to measure women’s agency as a “gold standard” to guide the choice of the best five quantitative, or close-ended, survey questions to use.

Approach

Preparing the long questionnaire and semi-structured interview guide

We identified the five best questions for measuring women’s agency—specifically agency within her household—from a large set of candidate questions. We constructed the set of candidate questions by combining close-ended questions from longer, existing surveys of women’s agency, and removing redundant questions. Questions were drawn from the Demographic and Health Surveys, Relative Autonomy Index, a J-PAL toolkit on measuring women’s agency, and the Sexual Relationship Power Scale. In total, we included 63 questions.

Next, we developed an interview guide for the semi-structured interviews. The questions covered six domains of women’s agency: education, fertility, mobility, health, employment, and household expenditures. Trained qualitative researchers conducted the interviews. We then applied qualitative coding methods to score each woman in each agency domain. We averaged these scores to arrive at an overall agency score. In the data analysis, we used this qualitative score as the benchmark against which we assessed the candidate quantitative questions.

Study sample

The study took place in Kurukshetra district in Haryana, India, a context with sizable gender gaps. For example, both overall in Haryana and within our sample, the female employment rate is below 20 percent.

Our sample of 209 women from 21 villages completed a semi-structured interview and close-ended survey module between February and May 2019. The average age of study participants was 30, and they had on average 10 years of education. All women in the study were married and had a child under the age of 10. This allowed us to compare women’s answers to similar questions, for example about their relationships with their husbands or decisions over children’s health. Note that this choice of a sample means that the measure of women’s agency is appropriate for partnered women with children, and not adolescents or other groups.

Data analysis: Narrowing down 63 questions to a five-question module

Which survey questions best predicted a woman’s “true” agency, as measured by her qualitative score? To find out, we used two statistical techniques to select the best ones. The primary method is LASSO stability selection. By running many LASSO regressions on random subsets of the data, this algorithm selects the five questions that correspond most closely with the qualitative score. (As a brief primer, LASSO is a “supervised machine learning” technique that differs from a standard regression in that the estimator sets some coefficients to zero to avoid over-fitting the data. From a full set of explanatory variables, only a subset is selected for inclusion in the statistical model.) The proposed measure of women’s agency combines the five questions into an index by normalizing each to have a standard deviation of 1 and then averaging them.
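To make the idea concrete, here is a minimal sketch of LASSO stability selection in plain Python. It is an illustration under stated assumptions, not the code used in the paper: the data are simulated, and the fixed penalty `lam`, the half-sample subsampling, the number of subsamples, and all function names are hypothetical choices made for this example. The sketch fits a LASSO on many random subsamples, records how often each question receives a nonzero coefficient, and keeps the five questions selected most often.

```python
import random


def standardize(col):
    """Rescale a column to mean 0 and standard deviation 1."""
    n = len(col)
    mean = sum(col) / n
    sd = (sum((v - mean) ** 2 for v in col) / n) ** 0.5
    if sd == 0:
        return [0.0] * n  # a constant column carries no information
    return [(v - mean) / sd for v in col]


def soft_threshold(z, lam):
    """Shrink z toward zero by lam; values within lam of zero become exactly 0."""
    if z > lam:
        return z - lam
    if z < -lam:
        return z + lam
    return 0.0


def lasso(columns, y, lam, n_iter=30):
    """Coordinate descent for (1/2n)*||y - Xb||^2 + lam*||b||_1.

    Assumes every column is standardized and y is centered.
    """
    n = len(y)
    beta = [0.0] * len(columns)
    resid = list(y)
    for _ in range(n_iter):
        for j, xj in enumerate(columns):
            # Correlation of question j with the partial residual
            rho = sum(x * r for x, r in zip(xj, resid)) / n + beta[j]
            new = soft_threshold(rho, lam)
            if new != beta[j]:
                delta = new - beta[j]
                resid = [r - delta * x for r, x in zip(resid, xj)]
                beta[j] = new
    return beta


def stability_selection(columns, y, k=5, n_subsamples=40, lam=0.2, seed=0):
    """Return the k questions most often given a nonzero LASSO coefficient."""
    rng = random.Random(seed)
    n, p = len(y), len(columns)
    counts = [0] * p
    for _ in range(n_subsamples):
        rows = rng.sample(range(n), n // 2)  # random half of the respondents
        sub_cols = [standardize([col[i] for i in rows]) for col in columns]
        y_sub = [y[i] for i in rows]
        y_mean = sum(y_sub) / len(y_sub)
        beta = lasso(sub_cols, [v - y_mean for v in y_sub], lam)
        for j, b in enumerate(beta):
            if b != 0.0:
                counts[j] += 1
    ranked = sorted(range(p), key=lambda j: (-counts[j], j))
    return ranked[:k], [c / n_subsamples for c in counts]


# Simulated (hypothetical) data: 12 candidate questions, 200 respondents.
# Only questions 0, 1, and 2 are related to the qualitative score.
rng = random.Random(0)
n_resp, n_questions = 200, 12
answers = [[rng.gauss(0, 1) for _ in range(n_resp)] for _ in range(n_questions)]
qual_score = [
    1.0 * answers[0][i] + 0.8 * answers[1][i] + 0.6 * answers[2][i] + rng.gauss(0, 1)
    for i in range(n_resp)
]

selected, frequency = stability_selection(answers, qual_score, k=5)
print("Selected questions:", selected)
print("Selection frequency:", [round(f, 2) for f in frequency])
```

In this simulation, the three informative questions should be picked in nearly every subsample, while the remaining two slots go to whichever uninformative questions happen to be selected most often. That mirrors the pattern reported in the post, where the choice among the lower-ranked questions matters less than getting the top few right.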

To understand how sensitive the selected questions were to the statistical method, we also used a second method, called backward sequential selection. This method starts with the full list of survey questions and iteratively drops the one question that causes the smallest decrease in explanatory power over women’s agency when the included questions are combined into an index. The procedure stops when five questions remain in the index. These statistical methods for variable selection are similar to standard machine learning techniques except that the number of questions chosen is constrained to be five.
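The backward procedure can be sketched in a few lines of plain Python. This is an illustrative version under stated assumptions rather than the paper's implementation: the data are simulated, the function names are hypothetical, and "explanatory power" is taken to be the squared correlation (R²) between the index and the qualitative score. The index is built as described in the post, by scaling each question to a standard deviation of 1 and averaging.

```python
import random


def standardize(col):
    """Rescale a column to mean 0 and standard deviation 1 (assumes it varies)."""
    n = len(col)
    mean = sum(col) / n
    sd = (sum((v - mean) ** 2 for v in col) / n) ** 0.5
    return [(v - mean) / sd for v in col]


def r_squared(x, y):
    """Squared Pearson correlation between two equal-length lists."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy * sxy / (sxx * syy)


def index_r2(std_cols, subset, y):
    """R-squared of the index formed by averaging the chosen standardized questions."""
    index = [sum(std_cols[j][i] for j in subset) / len(subset) for i in range(len(y))]
    return r_squared(index, y)


def backward_selection(columns, y, k=5):
    """Drop one question at a time until k remain.

    At each step, remove the question whose removal leaves the index with the
    highest R-squared, i.e. the smallest loss in explanatory power.
    """
    std_cols = [standardize(c) for c in columns]
    kept = list(range(len(std_cols)))
    while len(kept) > k:
        to_drop = max(
            kept,
            key=lambda j: index_r2(std_cols, [q for q in kept if q != j], y),
        )
        kept.remove(to_drop)
    return kept, index_r2(std_cols, kept, y)


# Simulated (hypothetical) data: questions 0-4 are noisy readings of a latent
# agency trait; questions 5-9 are unrelated to it.
rng = random.Random(1)
n_resp = 200
agency = [rng.gauss(0, 1) for _ in range(n_resp)]
qual_score = [a + rng.gauss(0, 0.5) for a in agency]
questions = [[a + rng.gauss(0, 0.7) for a in agency] for _ in range(5)]
questions += [[rng.gauss(0, 1) for _ in range(n_resp)] for _ in range(5)]

kept, r2 = backward_selection(questions, qual_score, k=5)
print("Questions kept:", sorted(kept))
print("Index R-squared:", round(r2, 3))
```

Because the uninformative questions only add noise to the averaged index, dropping them raises its R², so they are removed first and the informative questions survive.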

Results

We find that both LASSO stability selection and backward selection arrive at an index of women’s agency that is strongly correlated with the qualitative score derived from the semi-structured interviews. Table 1 reports the best set of survey questions to measure women’s agency, chosen based on their correspondence with the qualitative score. LASSO stability selection (column 1) is the preferred statistical technique. Overall, the two statistical methods selected similar sets of five questions that correspond quite closely to the qualitative score, with a correlation over 0.5.

| Question | (1) LASSO stability selection | (2) Backward selection |
| --- | --- | --- |
| Opinion heard when expensive item like a bicycle or cow is purchased? | 1 | 2 |
| Need permission from other household members to buy clothing for self? | 2 | 1 |
| Allowed to buy things in the market without asking partner? | 3 | |
| Permitted to visit women in other neighborhoods to talk with them? | 4 | 4 |
| Who do you consult with for decisions regarding your children’s health care? | 5 | |
| Allowed to go alone to meet your friends for any reason? | | 3 |
| Who in household decides to pay school fees for a relative from your side of family? | | 5 |
| Five-Question Index R² | 0.289 | 0.287 |

Notes: The table lists the top five survey questions selected. The numbers in the cells in columns (1) and (2) indicate the selection order, with 1 referring to the best or most predictive question.
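As a quick check on how the R² values in the last row of the table relate to the "correlation over 0.5" mentioned in the text, the short calculation below converts one into the other. It assumes that each R² comes from regressing the qualitative score on the single five-question index and that the correlation is positive. In that case the correlation is simply the square root of R². The variable names are illustrative.

```python
import math

# R-squared values reported in the last row of the table
r2_by_method = {
    "LASSO stability selection": 0.289,
    "Backward selection": 0.287,
}

# Assumption: with a single regressor (the index), R-squared equals the squared
# Pearson correlation, so the implied correlation is its square root.
correlations = {method: math.sqrt(r2) for method, r2 in r2_by_method.items()}

for method, r in correlations.items():
    print(f"{method}: implied correlation = {r:.3f}")
```

Both implied correlations come out slightly above 0.5, consistent with the figure quoted in the text.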

Three out of the five questions selected by LASSO stability selection (among 63 candidates) were also chosen by backward selection. When we consider ten-question versions of the modules, seven of the chosen questions overlap between the methods. This suggests that the results are quite robust to the specific method used. Moreover, the fourth- through tenth-best questions perform reasonably similarly, so the biggest gains come from identifying and using the best three questions, then identifying the best ten and drawing the rest of the module from that set.

The five-question index is much more correlated with the “truth” than if one chose five questions randomly. More strikingly, the five-question index has more explanatory power than indices constructed from all 63 candidate questions, by averaging them or using principal component analysis to combine them. When we used the methods to choose the best N-question module, the performance of the LASSO stability index peaked at N=15 questions. Thus, when deciding whether to include three or ten questions, there is a tradeoff between survey length and quality of the measure, but after a certain point, adding more questions is not helpful. What is key is to identify the best survey questions, those that are information-rich, rather than adding more.

Interestingly, none of the general questions that ask a woman to assess her overall agency or perception of her power were selected. The top three questions chosen by LASSO stability selection ask about the woman’s role in specific purchase decisions: large household purchases, clothing for the woman, and items in the market. The other two questions pertain to her physical mobility (whether she is permitted to visit women in other neighborhoods) and to decisions about her children’s health care.

Real-stakes choice experiment inadequate for measuring women’s agency

In addition to the qualitative interviews, we tested a “lab-in-the-field” game to measure women’s agency over household income. The game entailed a series of real-stakes choices a woman makes between money for herself or her husband. A potential advantage of a real-stakes choice is that it might be less susceptible to bias from respondents giving disingenuous answers they deem to be socially desirable.

The game did not work effectively in our study. The premise of this game is that a woman with less agency will more often choose money for herself because she would not have influence over how money given to her husband is spent. The key assumption is that women with low agency want more agency. However, some women with very low agency never want money for themselves, out of a belief that money is men’s domain or out of fear of their husband’s reaction. This made behavior in the lab game a noisy measure of women’s agency. While we do not draw general conclusions about the effectiveness of lab games versus qualitative interviews, in our study, the qualitative approach proved superior. Its other advantage is that it covers more domains of agency than financial agency.

Implications and Recommendations

Benefits of the module

The five-question module of women’s agency, validated against semi-structured interviews, is a valuable new resource for measuring women’s agency. Some of the questions, for example on the ability to influence household purchases, seem fairly universal and conceivably would apply in contexts outside north India. Others, related to the ability to visit friends, might be more relevant in India than in contexts with fewer restrictions on women’s mobility. Two of the questions are specific to married women, or women with children, the population for whom we designed the measure.

A natural direction for future work is to replicate the study to create short modules appropriate for other contexts and to assess the extent to which the same questions are selected elsewhere. One could also apply our method to design a “universal” module based on how robustly it predicts qualitative interview scores across multiple contexts. Widespread adoption of a common five-question module would allow for better comparisons of women’s agency across data sets; researchers, of course, could add many other questions tailored to their needs.

Another next step is to apply the five-question measure in impact evaluations to assess if it captures changes in agency that come about through policy interventions.

Benefits of the methodology

The study introduces a novel, mixed-methods way of developing a survey measure by using statistical methods to choose quantitative questions benchmarked against a qualitative measure. This new method, called MASI, could be applied to create survey modules for other complex concepts besides women’s agency. Many concepts—financial insecurity, cultural assimilation, trust in authority—are best measured with open-ended questions, yet there is a practical need for close-ended measures of them. Future research could apply the new MASI method to create survey modules for other nuanced constructs.

Lessons Learned

- Using qualitative interviews as a statistical benchmark can be valuable when designing short modules to measure complex concepts like women’s agency.

- More specific survey questions were more correlated with women’s agency as expressed in the qualitative interviews than were women’s overall assessments of their power.

- For measuring women’s agency through surveys, the key is to choose the right survey questions. Our analysis finds that after using the best 15 questions, adding more questions does not improve the measure. (With fewer than 15 questions, there is a tradeoff between a shorter survey module and a richer measure.)

- Lab games or other measures that assume that women with low agency have a desire to increase their agency might not work well in some contexts, such as north India.

Read the full paper for more information on this study and a list of all references.