The road to rigorous evaluation: training partnership builds use of evidence in California

This guest post was originally published on the State of CalData blog on February 25, 2022. The post shares reflections from Joy Bonaguro, California Statewide Chief Data Officer, and Jason Lally, Deputy Chief Data Officer. In September 2021, CalData and J-PAL North America partnered to provide a training on Designing Rigorous Evaluations of Government Programs to participants from government agencies across California.

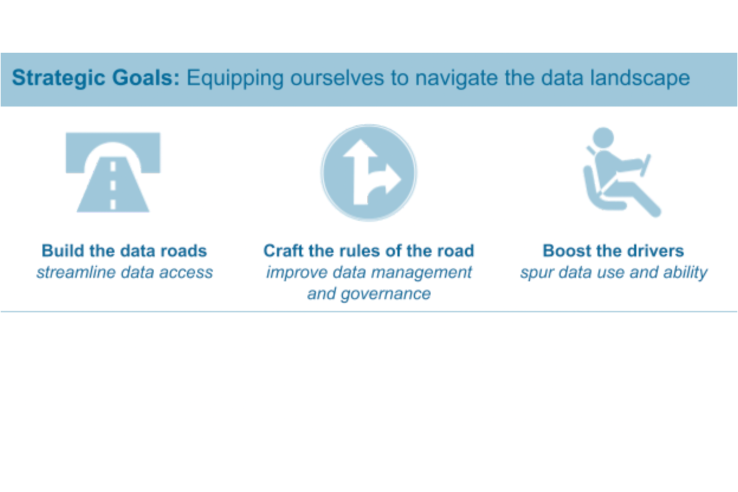

As part of our statewide data strategy, CalData is working to increase the capacity of the State workforce and leadership to appropriately use data and evidence to support their work. We want to improve the capacity to ask key questions, use data tools effectively, and approach evaluation across our workforce. Our partnership with J-PAL North America, a regional office of the Abdul Latif Jameel Poverty Action Lab (J-PAL), helped us move forward on building capacity for rigorous evaluation and data use.

Tailored training to fit departmental priorities

In September 2021, CalData and J-PAL North America partnered to offer a training on Designing Rigorous Evaluations of Government Programs to participants from government agencies across California. J-PAL North America specializes in randomized impact evaluations and has partnered with government agencies across many focus areas and settings to conduct evaluations. Their work is grounded in relevant, real-world experience, which came through in the training they provided. We were excited to bring J-PAL North America’s depth of expertise to the State of California.

We encouraged departments to nominate teams of several staff members so that attendees could build shared skills and collaborate on projects during the training. Participants came from a wide range of agencies, including the Department of Health Care Services, the Department of Human Resources, the Department of Public Health, the Strategic Growth Council, and other departments and groups.

The application process required each participant or project team to propose a specific project to work on during the training. These proposals showed us applicants’ learning needs and departmental priorities, which allowed us to tailor the training. The process also set expectations for participants and prepared them for the time commitment and technical content of the two and a half days of training.

J-PAL North America Co-Executive Director Vincent Quan, who presented a session on generalizability and applying evidence in new contexts, shared his thoughts on the value of collaborating on this tailored training: “This opportunity with California was exciting because we are helping to contribute to a broader culture of evidence-based policymaking. Participants from state agencies were an active audience who were eager to apply what they learned, and it was encouraging for us to see them mapping plans for how to build and navigate data infrastructure.”

Tackling real-world projects during the training

Because each participant joined the training with a real-world project already in mind—and because most participants attended in groups with colleagues from their department—the training guided participants to apply concepts to their own specific projects. Group work sessions with assistance from CalData and J-PAL North America staff facilitated group learning and allowed teams to collaborate on concepts such as measurement strategy and randomization design.

On the final day, each project group presented its data and evaluation plans. For example, one group from the Department of Public Health presented ideas for how to measure case management strategies that encourage follow-up testing of children with elevated blood lead levels. Participants walked away from the training with new ways of thinking about data measurement and evaluation that they can apply directly in their own contexts.

Communities of practice and long-term organizational change

This training was an early test of how we might roll out learning opportunities, and it will inform a broader data literacy strategy. We were lucky to bring on new CalData team members to start planning the next steps following the J-PAL workshop, including supporting participants as they apply what they learned in the training and exploring the development of future evaluation trainings.

To ensure that our efforts are sustainable, it is important to build a shared understanding of ways to meet our data and evaluation goals. Staff turnover across agencies naturally makes it harder to maintain continuity in our practices. Providing appropriate training, creating opportunities for connection, and sharing across organizational boundaries will help build this capacity and continuity. J-PAL North America helped participants understand evaluation methods and develop a sense of when and how to use randomized evaluation. We plan to draw on these concepts to implement and evaluate evidence-based programs and policies that we can sustain over time.

To understand our approach to data literacy and other aspects of our portfolio, we encourage you to read the California state data strategy. To dive deeper into data use and impact evaluation, we encourage you to find inspiration in course offerings such as those at Data Academy in San Francisco, explore J-PAL’s Research Resources and Evidence to Policy pathways, and subscribe to the CalData newsletter.