Teaching resources on randomized evaluations

Summary

Since 2005, J-PAL has offered its Evaluating Social Programs course in locations around the world. This week-long course provides a thorough introduction to randomized evaluations along with practical, step-by-step training in conducting one’s own evaluation. In addition to the in-person course, J-PAL offers a free online version in several languages. Teaching materials from the course are available on this resource page.

Why Evaluate

This lecture provides an introduction to impact evaluation, from the types of questions we can answer to how we can ensure that impact evaluations build on good program design and implementation.

Lecture materials:

- Why Evaluate slides (2024)

Theory of Change and Measurement

This lecture explores how impact evaluations build on our theories of change, how we define and measure key outcomes, types of data and where we can find them, and potential sources of measurement error.

Lecture materials:

Case studies on developing a theory of change and identifying appropriate outcomes and indicators:

- Summer Youth Employment Programs in the United States (Case Study)

- Shifting Students' Gender Attitudes in India (Case Study)

Why and When to Randomize

In this lecture, we present different impact evaluation methodologies, including randomized evaluations, and discuss the factors that can influence the choice of one method over another.

Lecture materials:

Case studies illustrating the assumptions underpinning different impact evaluation methods:

- Piped Water Adoption in Urban Morocco (Case Study)

- Estimating the Impact of Climate Risk Insurance in Burkina Faso (Case Study)

How to Randomize

This lecture introduces random sampling and random assignment and illustrates how the research questions of interest and real-world constraints guide randomization design choices.

Lecture materials:

- How to Randomize slides (2024)

Case studies illustrating how the randomization design of an evaluation can be tailored based on the research question(s):

- Loans and Grants for Microenterprises in Egypt (Case Study)

- Promoting Economic Inclusion and Resilience in Niger (Case Study)
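The difference between random sampling (which units enter the study) and random assignment (which study units receive the program), along with stratification, can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not J-PAL's procedure: the function names, the hypothetical village IDs and regions, the fixed seed, and the rule of treating half of each stratum (with any odd unit going to control) are all illustrative choices.

```python
import random
from collections import defaultdict


def random_sample(population, k, seed=2024):
    """Random sampling: draw k units from the population to form the study sample."""
    rng = random.Random(seed)  # fixed seed so the draw can be reproduced and audited
    return rng.sample(sorted(population), k)


def stratified_assignment(units, stratum_of, seed=2024):
    """Random assignment within strata: treat half of each stratum.

    units      -- study units (hypothetical IDs)
    stratum_of -- dict mapping each unit to its stratum (e.g., region)
    Returns a dict mapping unit -> "treatment" or "control".
    """
    rng = random.Random(seed)
    strata = defaultdict(list)
    for unit in units:
        strata[stratum_of[unit]].append(unit)

    assignment = {}
    for stratum in sorted(strata):  # fixed stratum order so results are reproducible
        members = sorted(strata[stratum])  # fixed unit order before shuffling
        rng.shuffle(members)
        n_treat = len(members) // 2  # in odd-sized strata the extra unit goes to control
        for i, unit in enumerate(members):
            assignment[unit] = "treatment" if i < n_treat else "control"
    return assignment


# Usage with hypothetical data: sample 10 of 20 villages, then randomize within region.
population = [f"village_{i:02d}" for i in range(20)]
sample = random_sample(population, 10)
region = {v: ("north" if int(v[-2:]) < 12 else "south") for v in sample}
assignment = stratified_assignment(sample, region)
print(assignment)
```

Stratifying guarantees balance on the stratifying variable (here, region) by construction, rather than leaving it to chance.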

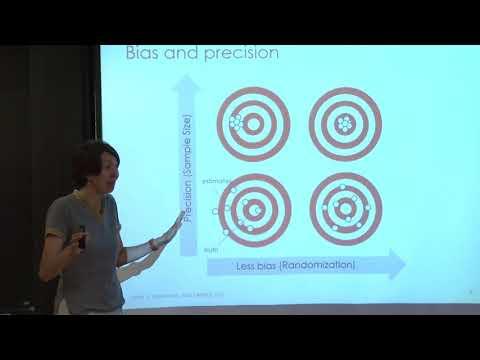

Sample Size and Power

This lecture introduces the concept of statistical power and walks through the factors that influence the power of a study, including the design choices introduced in How to Randomize and the outcomes determined in Theory of Change and Measurement. The lecture slides reflect two technical tracks: the Essentials of Sample Size and Power slides cover the intuition and basic principles behind power calculations, while the Mechanics of Power slides cover the statistical framework for conducting them.

Lecture materials:

Exercise on power calculations:

- An exercise in conducting power calculations with EGAP’s web application, which also considers the trade-offs involved in designing a well-powered randomized evaluation.
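The factors that drive power (significance level, desired power, outcome variance, share of the sample treated, and clustering) combine in the standard minimum detectable effect (MDE) formula, sketched below in Python. This is an illustration under simplifying assumptions, not the method behind EGAP's web application: it uses the normal approximation, assumes equal-sized clusters summarized by an intracluster correlation (ICC), and ignores covariates. The function names and defaults are hypothetical.

```python
from math import ceil, sqrt
from statistics import NormalDist


def _multiplier(alpha, power):
    """z_{1-alpha/2} + z_{power}: two-sided critical value plus the power quantile."""
    z = NormalDist().inv_cdf
    return z(1 - alpha / 2) + z(power)


def _design_effect(cluster_size, icc):
    """Variance inflation from randomizing equal-sized clusters instead of individuals."""
    return 1 + (cluster_size - 1) * icc


def minimum_detectable_effect(n, sd, share_treated=0.5, alpha=0.05, power=0.8,
                              cluster_size=1, icc=0.0):
    """Smallest true effect (in outcome units) detectable with the given power.

    MDE = (z_{1-alpha/2} + z_power) * sqrt(D / (P * (1 - P))) * sd / sqrt(n),
    where n is the total number of individuals, P the share treated,
    and D the design effect.
    """
    variance_factor = _design_effect(cluster_size, icc) / (share_treated * (1 - share_treated))
    return _multiplier(alpha, power) * sqrt(variance_factor) * sd / sqrt(n)


def required_sample_size(mde, sd, share_treated=0.5, alpha=0.05, power=0.8,
                         cluster_size=1, icc=0.0):
    """Total individuals needed to detect an effect of size mde (the MDE formula solved for n)."""
    variance_factor = _design_effect(cluster_size, icc) / (share_treated * (1 - share_treated))
    return ceil((_multiplier(alpha, power) * sd / mde) ** 2 * variance_factor)


# Usage: detecting a 0.2 standard deviation effect with half the sample treated.
print(required_sample_size(mde=0.2, sd=1.0))                             # individual randomization
print(required_sample_size(mde=0.2, sd=1.0, cluster_size=20, icc=0.05))  # clusters of 20 people
```

Moving away from a 50/50 split, randomizing at the cluster level, or facing a noisier outcome all raise the required sample size in this formula.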

Ethical Considerations

This lecture offers researchers and practitioners practical guidance for thinking about ethics in study design and implementation, and shows how to apply that guidance in real-world settings.

Lecture materials:

Threats and Analysis

This lecture illustrates some of the challenges that can occur while conducting a randomized evaluation and how researchers can approach these challenges in the implementation and analysis phases.

Lecture materials:

- Threats and Analysis slides (2024)

Case studies illustrating how to analyze and interpret the results of a randomized evaluation in the presence of various threats to analysis, such as attrition, spillovers, and non-compliance:

- Vocational Training in Colombia (Case Study)

- Rural Sanitation in Indonesia (Case Study)
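One standard way to handle non-compliance, one of the threats listed above, is sketched below in Python on made-up data. It computes the intention-to-treat (ITT) difference in means and divides it by the difference in take-up rates between the groups (the Wald estimator). Under the usual instrumental-variables assumptions (random assignment, assignment affects outcomes only through take-up, and no defiers who take up the program only when assigned to control), the ratio estimates the average effect on compliers. The function name and data are hypothetical, and the sketch ignores attrition, spillovers, and standard errors.

```python
from statistics import mean


def itt_and_wald(outcomes, assigned, took_up):
    """Return (ITT, first stage, Wald estimate) from unit-level data.

    outcomes -- observed outcome for each unit
    assigned -- 1 if randomly assigned to treatment, 0 if assigned to control
    took_up  -- 1 if the unit actually received the program, else 0
    """
    treat = [i for i, z in enumerate(assigned) if z == 1]
    control = [i for i, z in enumerate(assigned) if z == 0]

    # Intention to treat: compare groups as randomized, regardless of take-up.
    itt = mean(outcomes[i] for i in treat) - mean(outcomes[i] for i in control)
    # First stage: how much assignment changed the take-up rate.
    first_stage = mean(took_up[i] for i in treat) - mean(took_up[i] for i in control)
    if first_stage == 0:
        raise ValueError("assignment did not change take-up; the Wald ratio is undefined")
    # Wald estimator: scale the ITT by the difference in take-up.
    return itt, first_stage, itt / first_stage


# Hypothetical data: 8 units, half assigned to treatment, half of those take up.
outcomes = [14, 12, 8, 6, 7, 9, 8, 8]
assigned = [1, 1, 1, 1, 0, 0, 0, 0]
took_up = [1, 1, 0, 0, 0, 0, 0, 0]
print(itt_and_wald(outcomes, assigned, took_up))
```

Note that the ITT compares everyone as randomized; comparing only those who took up the program against the control group would reintroduce selection bias.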

Generalizability

How can results from one context inform policies in another? This lecture provides a framework for how to assess the applicability of evidence across contexts.

Lecture materials:

- Generalizability slides (2024)

Please note that the teaching resources referenced here were curated for specific research and training needs and are made available for informational purposes only. Please email us for more information.

Last updated July 2024.

These resources are a collaborative effort. If you notice a bug or have a suggestion for additional content, please fill out this form.

We thank Therese David for thoughtful comments and suggestions. We also thank past and present members of the Training teams at all J-PAL offices for their assistance in creating these materials. Any errors are our own.